Practical Guidance for Engaging End-Users and Experts in Developing Scientific Tools

Links

- Data Release: USGS data release - Survey Responses Collected in 2024 Measuring End-users' and Experts' Experiences Being Engaged in Development of Scientific Tools

Acknowledgments

Thank you to the Biological Threats and Invasive Species Research Program for proposing and investing in this project and supporting the engagement and coproduction of science tools. In addition, thank you to the many project partners who responded to our survey and project leads who participated in this study and provided thoughtful and insightful lessons learned from their experiences. Special thanks to Aparna Bamzai-Dodson and Ella Samuel for reviewing and providing feedback on this report.

Abstract

This report provides actionable guidance for scientists developing scientific tools that inform on-the-ground decision making. Scientific tools, in the context of this report, are technology or protocols that help practitioners collect and analyze their own data, and information products and web tools that practitioners could use to inform decisions. Engaging end-users and fellow experts is fundamental to the creation of useful scientific tools. Scientists can use clear and specific direction on action steps and activities to effectively engage with end-users and fellow experts during development. Our study explores lessons learned from six U.S. Geological Survey projects that designed and implemented engagement activities with end-users and experts to coproduce scientific tools for natural resource managers. U.S. Geological Survey teams engaged end-users and experts across the United States from Federal, State, and local governments; universities; Tribes; territories; and nongovernmental organizations in designing and developing scientific tools intended to support end-users in their work. An online survey with 98 participants measured satisfaction across several indicators of successful engagement, including engagement activity frequency, sufficient opportunities to provide feedback, feedback implementation, inclusion of necessary perspectives, and functionality of the tool for end-users. Semistructured interviews were held with project leads, during which the project leads reviewed a summary of the survey results. The project leads reflected on the engagement efforts used in their project, then described lessons learned from the engagement experience and participant feedback. 
Common themes for ensuring effective engagement identified through thematic analysis included engaging end-users during product conceptualization; establishing clear roles and expectations; considering who end-users are and how end-users may use the tool; recruiting participants through your network, boundary spanners, and leadership; understanding individual use cases; communicating how feedback was integrated into the product; and strategically using virtual meeting tools. This guide shares practical steps and exercises for planning and facilitating effective engagement based on lessons learned from project leads and case study summaries of each project.

Introduction

Scientists developing tools and information products for on-the-ground applications can use guidance on the best practices and strategies to effectively engage end-users and fellow experts during the development process. Attention to these efforts typically focuses on research (for example, coproduced scientific findings that can be used by a practitioner [Beier and others, 2017]) or action (for example, community engagement or deliberation that incorporates perspectives of all parties who may be affected by the decision-making process [Reed and others, 2018]). The coproduction process, in which researchers and practitioners work together to produce science useful for decision making, is a common goal of conservation scientists striving to produce actionable scientific research findings (Bamzai-Dodson and others, 2021). Though information provided by coproduced research is valuable, scientists’ other products and resources (such as technology, tools, online interfaces, and protocols) enable conservation practitioners to ask and answer scientific questions in their work context. These resources either directly inform management decisions or enable other scientists and practitioners to collect or analyze data for their own research that informs management decisions. Coproduced research tools and resources that are developed to inform management are distinct from research that produces findings with the primary aim of advancing broad scientific knowledge. Coproduction is also broader than engagement that produces decision-support tools, which are software systems or processes that assist the user in solving complex problems by producing decision-relevant information (Geoffrion, 1983; Pearman and Cravens, 2022). This report provides actionable guidance for engaging end-users and other experts developing scientific tools beyond the domain of research.
End-user and expert engagement and the requisite activities facilitating that engagement are fundamental to creating useful scientific tools.

All bolded terms are common terms used throughout this report, and the definitions can be found in the “Glossary” section.

For this guide, we define “scientific tools” as web tools, scientific protocols, or information products that support research or inform decisions for a natural resource management issue, often used across geographies. The term “web tool” refers to a scientific tool that has a web-based interface (with one or more components), which the user interacts with to learn or create new information. A “scientific protocol” is defined as a scientific tool that provides instructive, step-by-step guidance on specific methods of data collection, analysis, or interpretation. Scientific protocols may include technology, machines, or physical equipment. Finally, “information products” are static information providing general guidance about scientific topics, methods, or findings that answer a specific research question for on-the-ground applications. These products can be web-based or more traditional reports and journal articles. The categories used throughout this report are based on the project leads’ descriptions of their tools. We define the terms “end-user” as the person who directly interacts with a scientific tool and uses the knowledge from the tool to inform their work, and “expert” as a person with subject matter expertise in the content, method, or scientific discipline upon which a scientific tool is based.

Knowledge exchange and other forms of transdisciplinary collaboration and engagement are used to effectively address complex conservation threats that span across disciplinary, social, geographic, and ecological boundaries (Margules and others, 2020). Terminologies, frameworks, and epistemological perspectives related to knowledge exchange abound, such as stakeholder engagement (Reed and others, 2018), public participation (Parkins and Mitchell, 2005), coproduction (Beier and others, 2017), transdisciplinary science (Steger and others, 2021), collaborative conservation (Wilkins and others, 2021), social learning (Schusler and others, 2003), translational ecology (Schlesinger, 2010), and participatory science (Cvitanovic and others, 2019). Some principles of research coproduction are relevant to scientific tool development, such as representation, which stipulates that a project team should “systematically represent research user knowledge needs and priorities” (Reed and others, 2014, p. 341). Other practices are less relevant, such as engaging users in developing research questions or study designs (Reed and others, 2014; Meadow and others, 2015). Existing guidance on engaging end-users to produce actionable science describes types of engagement and specific approaches and activities for scientists to facilitate effective engagement (Bamzai-Dodson and others, 2021). Other research focuses on barriers to producing actionable science (Pearman and Cravens, 2022), learning from decision-support tool design and development (Stoltz and others, 2023), applying translational ecology for invasive species management (Morelli and others, 2021), coproducing actionable science (Beier and others, 2017), and finding the best practices for general stakeholder engagement (Reed, 2008; Reed and others, 2018). These studies contribute to a foundation of principles and practices for useful and actionable science. 
This type of guidance is particularly relevant to organizations like the U.S. Geological Survey (USGS), which “monitors, analyzes, and predicts current and evolving Earth-system interactions and delivers actionable information at scales and timeframes relevant to decision makers” (USGS, 2037). This report provides scientists with guidance on specific processes and activities that (1) facilitate engagement in scientific tool development and (2) address issues such as usability and product design.

The intended audience of this guide is scientists or project teams creating geographically agnostic web tools, scientific protocols, or information products used by land and resource managers. In other words, the tools can be used at multiple scales and geographies and likely require engagement at a large scale, such as nationally, instead of one specific community or geographic place. These tools typically support research or inform decisions for a natural resource management issue, though these tools may also be applied in other contexts or more narrow geographic areas.

Methods Used to Inform This Guide

This guide was informed by a mixed qualitative-quantitative study of six projects led by USGS scientists to produce scientific tools: 2 projects produced a web tool, 2 produced a scientific protocol, and 2 produced an information product. During one or more years, each project facilitated engagement activities with potential end-users of the intended scientific tool or experts in a topical area related to the tool (hereafter collectively referred to as “participants”). These six projects were chosen because they were part of a broader national initiative about invasive species, which required project teams to plan, implement, and evaluate end-user engagement. As a result, an engagement coordinator, the first author of this report, was brought on to the initiative to coordinate and advise engagement activities and evaluate project teams’ engagement efforts using a structured survey. This survey provided the quantitative data for this study. Subsequently, the first author led semistructured interviews with project leads to gather lessons learned from their experiences. These interviews provided the qualitative data for this study. The following two subsections detail the methodology for the survey and semistructured interviews. The guidance and practical steps outlined throughout this report are based on an analysis of the interviews with project leads.

Summaries of each project and engagement efforts are provided in appendixes 1–3. We use the term “project lead” to refer to the person or people, typically scientists, who are the primary decisionmakers or points of contact for a project. We use the term “project team” to refer to the larger group of people, typically scientists, working to develop a scientific tool. As part of their engagement efforts, each project team systematically recruited and facilitated engagement activities with participants. Project leads across all six projects typically used the term “partner” for project participants, which is reflected in this report’s exemplary quotations. The lead author on this guide assisted in coordinating and advising, but not facilitating, engagement activities across the six projects and surveyed participants to evaluate the engagement process.

Survey Methodology

We developed the survey using existing research on coproduction and stakeholder engagement to produce actionable science in the contexts of natural resource management and medical research (Wall and others, 2017; Bamzai-Dodson and others, 2021; Meadow and Owen, 2021; Bamzai-Dodson and McPherson, 2022). Each question measured a specific indicator of successful engagement identified in the research on coproduction of actionable science (Lavallee and others, 2012; Ray and Miller, 2017; Wall and others, 2017). The indicators we chose to measure in the survey and the corresponding survey questions are summarized in table 1, and the full questionnaire is available in appendix 4.

Table 1.

Summary of survey questions evaluating participants’ experiences engaging or collaborating on projects to develop scientific tools.

[N/A, not applicable]

| Indicators | Survey questions and statements | Response options |
|---|---|---|
| Participants perceive they had equitable opportunities to participate in the project (such as, meetings and workshops; adapted from Wall and others, 2017, p. 102) | The project team has provided me with sufficient opportunities to inform the project. | 7-point scale: Strongly disagree to strongly agree |
| Trust—“Stakeholders are confident that project outcomes reflect the discussions and decisions reached through a deliberative process” (Lavallee and others, 2012, p. 401) | I trust that the project team has considered the feedback I have given. | 7-point agreement scale: Strongly disagree to strongly agree |
| The participant group is diverse and representative of key perspectives (adapted from the criteria termed “legitimacy” in Lavallee and others, 2012, p. 401) | The project team is engaging partners with the necessary subject matter expertise and management perspectives to inform the project. | 7-point agreement scale: Strongly disagree to strongly agree |
| Frequency and medium of communication between the project team and participants (adapted from Wall and others, 2017, p. 102) | For each of the following types of interactions that you have participated in, what is your perception of the frequency of this type of interaction? • Emails • One-on-one calls or meetings • Presentations (for example, updates or webinars about the project) • Virtual group meetings • In-person interactions • Other | 5-point scale: • Far too little, • Slightly too little, • The right amount, • Slightly too much, • Far too much, or • N/A if you did not participate in this type of interaction. |
| Level of engagement in the project (question design informed by Bamzai-Dodson and others, 2021) | Which of the following best describes your involvement in this project? | • I am informed about the project’s progress, final products, and outputs. • I am consulted for feedback on certain aspects of the project, such as analyses, design, implementation, products, and outputs. • I collaborate with the project team to formulate solutions and design final products and outputs. • I am a coequal to the rest of the project team; I provide foundational input and recommendations to the project development. |
| Findings or outputs meet the standard that the participants apply to usable information for action (adapted from Wall and others, 2017, p. 102) | How functional do you believe the scientific tool(s) or product(s) this project is producing will be to support your invasive species work? | • Not at all (not well-developed at all; not functional). • Minimal (very limited in scope, scale, or function). • Moderate (generally functional with notable insufficiencies or limitations). • Good (gaps may exist for minor elements). • Robust (well-developed and highly functional). |
| “[Participants] are satisfied with the level of engagement” (Wall and others, 2017, p. 102) | How do you feel about your experience with this project team? | 7-point scale: Extremely dissatisfied to extremely satisfied |

The questionnaire consisted of 17 questions, followed the requirements for the Department of the Interior Programmatic Clearance for Customer Satisfaction Surveys, and was approved under Office of Management and Budget control number 1040-0001.

We prioritized indicators based on how actionable the feedback about that indicator would be for project leads. For example, learning whether participants would like fewer or more frequent virtual group meetings could easily be interpreted and acted upon. In addition, project teams were consulted on what types of questions would provide the most useful information. This consultation resulted, for example, in adding a question asking participants how functional the projects’ deliverables would be for their work and what engagement level participants perceived they were involved in. Furthermore, the survey served as a formative evaluation because most projects were ongoing and had not released final products.
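As a rough illustration of why the frequency indicator is easy to act on, the sketch below tallies responses on the 5-point frequency scale from table 1 and suggests a direction for adjustment. The function name, the decision rule (comparing "too little" against "too much" counts), and the example responses are assumptions for illustration only; they are not the analysis used in the study and not study data.

```python
from collections import Counter

# Scale labels taken from table 1; "N/A" responses are excluded from the tally.
SCALE = [
    "Far too little",
    "Slightly too little",
    "The right amount",
    "Slightly too much",
    "Far too much",
]


def summarize_frequency(responses):
    """Tally responses per scale point and suggest a direction for adjustment.

    The comparison rule below is a hypothetical simplification.
    """
    counts = Counter(r for r in responses if r in SCALE)
    too_little = counts["Far too little"] + counts["Slightly too little"]
    too_much = counts["Slightly too much"] + counts["Far too much"]
    if too_little > too_much:
        suggestion = "consider more frequent interactions"
    elif too_much > too_little:
        suggestion = "consider less frequent interactions"
    else:
        suggestion = "no clear preference for change"
    return {label: counts[label] for label in SCALE}, suggestion


# Hypothetical responses about virtual group meetings (not study data).
example = [
    "The right amount",
    "Slightly too little",
    "Far too little",
    "N/A",
    "The right amount",
    "Slightly too much",
]
tally, suggestion = summarize_frequency(example)
print(tally)
print(suggestion)
```

A project lead could run a tally like this separately for each interaction type (emails, one-on-one calls, presentations, and so on) to see where the cadence might be adjusted.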

The survey was pretested using Qualtrics survey software by eight individuals with knowledge about the projects or expertise in stakeholder engagement or coproduction research. Feedback from this pretest group was incorporated, including suggestions and clarifications about wording in the questions, answer choices, and survey introductions. This feedback was followed by a review of the survey questions by nine project leads on the projects whose engaged participants comprised the sample population for the survey, as well as a USGS employee who oversees the projects. This final review resulted in two minor edits to wording to improve clarity, but otherwise, the survey was approved by all project leads.

The sample population consisted of 178 individuals who were invested in the outcomes of the projects but not part of the core USGS project teams. As part of the engagement efforts across the six projects, project leads designed engagement plans and kept up-to-date lists of engagement activity participants. Project leads were also informed of the survey and the need for participants’ contact information in advance. Because some participants were listed in multiple projects, the first question of the questionnaire read, “For the purpose of this survey, we are asking that you respond based on your experience with just one project, even if you have been involved in multiple. Please select the project with which you have been the most involved [original emphasis]. Use this project as the basis for your answers for the rest of the questions in this survey.”

Following the best practices for web-based survey research (Dillman and others, 2014), two reminder emails were sent to the distribution list after the initial survey distribution on April 30, 2024, using Qualtrics survey software. The survey closed June 19, 2024. Website data showed that 113 people accessed the introduction page and advanced at least to the survey’s first question. Out of these respondents, 15 answered too few questions to be included in the analysis. Another 7 did not finish the survey but provided responses to several questions included in the analysis. There were 91 respondents who finished the survey, which resulted in a response rate of 63.48 percent and a completion rate of 80.53 percent. In total, there were 98 responses representing Federal (47), academic (23), State (8), nongovernmental (7), Tribal (2), and private (2) organizations.
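The rates reported above can be reproduced from the counts in the text. The short check below is a sketch that assumes the response rate is the number of people who reached the first question divided by the sample population, and that the completion rate is the number who finished divided by the number who reached the first question; the text does not state these formulas explicitly, but they match the reported percentages. Variable and function names are illustrative.

```python
# Counts reported in the text above.
sample_size = 178      # individuals in the sample population
accessed = 113         # advanced at least to the first question
too_few_answers = 15   # answered too few questions; excluded from analysis
partial = 7            # did not finish but provided usable responses
finished = 91          # finished the survey


def percent(numerator: int, denominator: int) -> float:
    """Return numerator / denominator as a percentage rounded to 2 decimals."""
    return round(100 * numerator / denominator, 2)


# Assumed definitions (inferred from the reported figures).
response_rate = percent(accessed, sample_size)
completion_rate = percent(finished, accessed)

# Responses included in the analysis: finished plus usable partial responses.
analyzed = finished + partial

print(response_rate, completion_rate, analyzed)
```

Under these assumptions, the computed values agree with the 63.48 percent response rate, the 80.53 percent completion rate, and the 98 responses reported in the text.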

Semistructured Interviews

After closing the online survey and analyzing results, the lead author led a series of semistructured interviews in September and October 2024 with the 10 project leads across the 6 projects included in the survey (table 2).

Table 2.

Summary of the number of projects and project leads interviewed for each type of scientific tool included in the study.

Semistructured interviews were administered according to the best ethical practices for research on human subjects, including informed consent. Interviewees were first invited to participate in an interview. At the start of the interview, interviewees were told how the data would be analyzed and reported, and that interviewees could decline or stop the interview at any time. All interviewees consented to participating in a recorded interview. For 4 of the projects (2 web tools and 2 information products), 2 project leads were interviewed about each project. For the 2 projects developing scientific protocols, 1 project lead was interviewed about each project, for a total of 10 project leads interviewed. All interviewees were interviewed separately, even if an interviewee worked on the same project as another interviewee.

All interviewees were asked 13 questions in total. The interview questions were designed to directly inform this end-user engagement guide and were based on successful engagement indicators measured through the questionnaire, such as the frequency of interaction and communicating to participants how feedback was incorporated into the product. Interviewees were also asked about the product itself, how the project team recruited and engaged participants, and what interviewees would do differently. After a series of questions on these topics, the lead author presented the project leads with the survey results from their specific project’s participants. Then, the interviewees were asked several more questions, including what the interviewees would do differently after reviewing participant feedback and what lessons learned interviewees would share about participant engagement with a fellow scientist for a similar project. The complete interview guide can be found in appendix 5.

Interviews were recorded, with consent from the interviewees, and transcribed by the lead author. The shortest and longest interviews were 52 and 98 minutes, respectively, with an average length of 70 minutes. The lead author then assessed the data through a thematic analysis of the transcripts, a method for identifying and reporting data themes, following six phases of analysis outlined by Braun and Clarke (2006, p. 87): (1) “familiarizing yourself with the data,” (2) “generating initial codes,” (3) “searching for themes,” (4) “reviewing themes,” (5) “defining and naming themes,” and (6) “producing the report.”

Primary codes (representing high-level themes) were mainly determined before analysis, based on indicators similar to indicators in the questionnaire (refer to app. 4) as well as others outlined in Wall and others (2017). Primary codes determined before the analysis consisted of “necessary perspectives are included in the partner group,” “sufficient and accessible opportunities to engage,” “the project team,” “engagement timing,” and “engagement barriers.” During analysis, several other primary codes were identified: “meaningful engagement activities,” “effective facilitation,” and “the right partners.” Secondary codes (representing specific themes within each primary code) were identified through an inductive, or bottom-up, process, which means the themes are strongly linked to the data. The full codebook and the number of times the secondary codes were mentioned are presented in appendix 6.
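The two-level coding structure described above can be pictured as a nested tally of secondary codes within primary codes. The sketch below is illustrative only: the primary code labels come from the text, but the secondary code labels, the excerpt tuples, and the `count_mentions` helper are hypothetical and do not reproduce the codebook in appendix 6 or the software used in the study.

```python
from collections import Counter, defaultdict

# Primary codes named in the text (five set before analysis, three added during it).
PRIMARY_CODES = {
    "necessary perspectives are included in the partner group",
    "sufficient and accessible opportunities to engage",
    "the project team",
    "engagement timing",
    "engagement barriers",
    "meaningful engagement activities",
    "effective facilitation",
    "the right partners",
}


def count_mentions(coded_excerpts):
    """Count how often each secondary code appears under each primary code."""
    counts = defaultdict(Counter)
    for _interviewee, primary, secondary in coded_excerpts:
        if primary not in PRIMARY_CODES:
            raise ValueError(f"Unknown primary code: {primary!r}")
        counts[primary][secondary] += 1
    return counts


# Hypothetical coded excerpts: (interviewee number, primary code, secondary code).
excerpts = [
    (2, "engagement timing", "engage during conceptualization"),
    (7, "effective facilitation", "facilitation training"),
    (10, "engagement timing", "engage during conceptualization"),
]

mentions = count_mentions(excerpts)
for primary in sorted(mentions):
    for secondary, n in mentions[primary].most_common():
        print(f"{primary} > {secondary}: {n}")
```

Because secondary codes were identified inductively, they are kept as free-form strings here, while primary codes are validated against a fixed set.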

All practical steps outlined in this report are based on the analysis of interviews and survey data. The thematic analysis was designed to identify specific lessons learned on accomplishing or addressing each of the overarching themes or indicators of effective engagement outlined in the primary codes. Therefore, the practical steps described throughout the report mirror the codebook (app. 6). This approach identified specific steps to directly address engagement principles and indicators at a level of detail that is often missing in existing literature. Throughout the report, findings from this analysis are presented in several ways to inform the audience of this report most effectively: descriptions of themes and exemplary quotations that illustrate a practical step, syntheses of how these themes relate to each other, and discussions about how themes exemplify existing guidance and engagement research. Because some of our themes mirror engagement principles and indicators described in existing literature, discussion of relevant literature is incorporated where appropriate. Therefore, our report combines the results and discussion sections into one cohesive set of practical guidance. For example, the need to thoughtfully consider who the end-users of a scientific tool are and how end-users will use the tool was a key theme. This finding’s description includes an example of an exercise to accomplish this step, which is commonly used in the technology sector and described in existing guidance. Similarly, we identified a multitude of themes that reflect the human-centered design approach, so we describe this approach and offer several resources to learn more about it. In addition, exemplary quotations are provided throughout the report as a mechanism to directly represent the lived experiences of interviewees and more intimately relay their advice to fellow scientists consulting this report. Quotations have been lightly edited for brevity and clarity.

Practical Guidance for Effective End-User and Expert Engagement

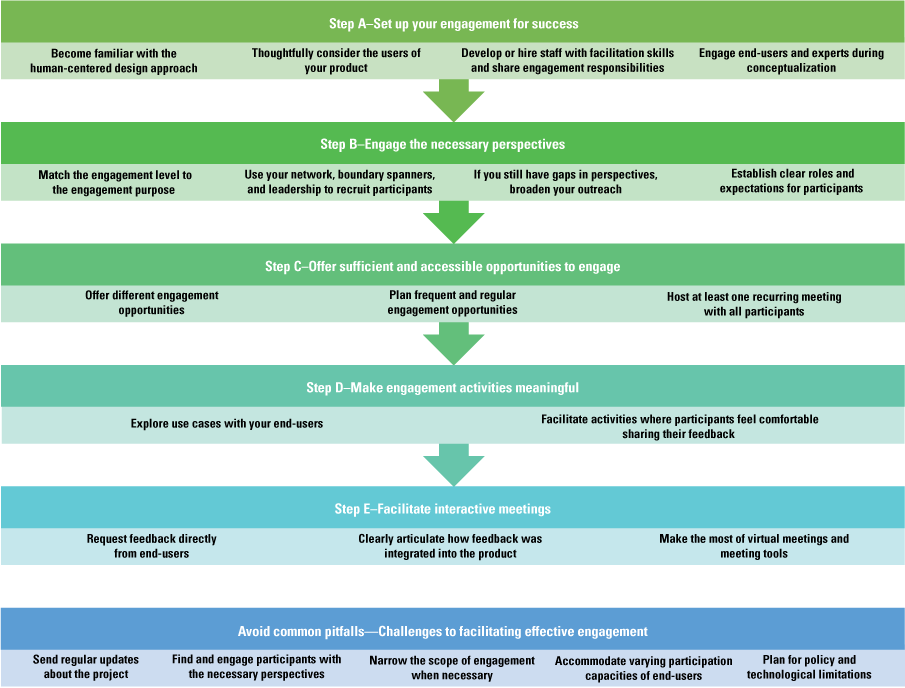

The following guidance is organized in chronological order, from “Step A—Set Up Your Engagement for Success” to “Step E—Facilitate Interactive Meetings.” We end with guidance to avoid common pitfalls that applies across steps A–E. Each step is relevant to all three types of scientific tools (web tools, scientific protocols, and information products) unless otherwise noted. A visual outline of these steps for effective engagement is presented in figure 1.

Figure 1.

Flow chart of practical steps for effective end-user and expert engagement for scientific tools. This figure serves as a visual outline of the guidance provided in this report and mirrors the subsections of the report and the codebook used for the report’s thematic analysis of the interview data.

Step A—Set Up Your Engagement for Success

This section describes methods and approaches to set up engagement efforts for success.

Become Familiar with the Human-Centered Design Approach

The human-centered design approach is a structured method for developing tools, products, or experiences starting with a “deep understanding of the intended user’s needs and point of view” (Bamzai-Dodson and others, 2021, p. 1032). This approach begins at the start of the project and continues iteratively throughout the project development by engaging with users multiple times as user feedback is incorporated and the product is modified. In the present study, successful engagement by project leads included engagement methods that mirrored the human-centered design approach, including the following themes: engaging end-users in the conceptualization of the product, engaging end-users regularly throughout the project, and thinking about the end-users of the product and their use cases.

For resources that provide guidance on the human-centered design approach, refer to the U.S. General Services Administration (2037), IDEO.org (2015), Interaction Design Foundation (2016), and Consortium for Public Education (2025).

Thoughtfully Consider the Users of Your Product

Multiple interviewees noted that successful engagement depends on understanding how your product will be used, and that learning from potential end-users and collaborators first requires identifying them. This lesson reflects similar guidance from Stoltz and others (2023), who advise tool creators to define the target users of the tool and understand the decision context. In Stoltz and others (2023), interviews of decision-support tool creators revealed that within USGS, “unsuccessful DSTs [decision-support tools] are often built without considering who the target users of the tool will be” (p. 14). However, our interviewees noted that predicting every potential end-user of a tool can be challenging. Upon reflecting on more than 5 years of facilitating engagement for a web tool, one interviewee stated,

Broadening that idea of what our ideal user was would have helped us reach out to groups like [Federal agency name redacted for privacy] or county weed managers, and really thinking about what is the scale at which this tool operates effectively. And that’s hard to know from an early stage of development, who your true adopters are. (Interviewee 2)

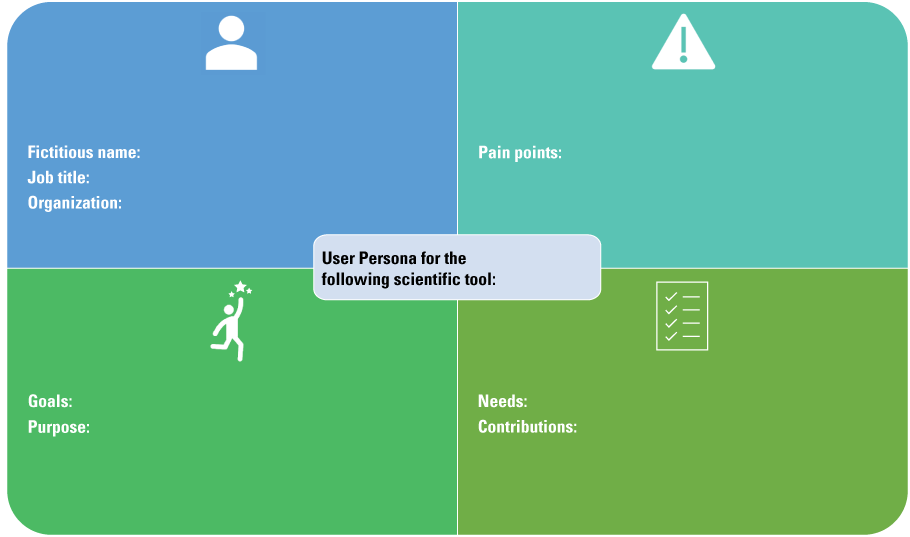

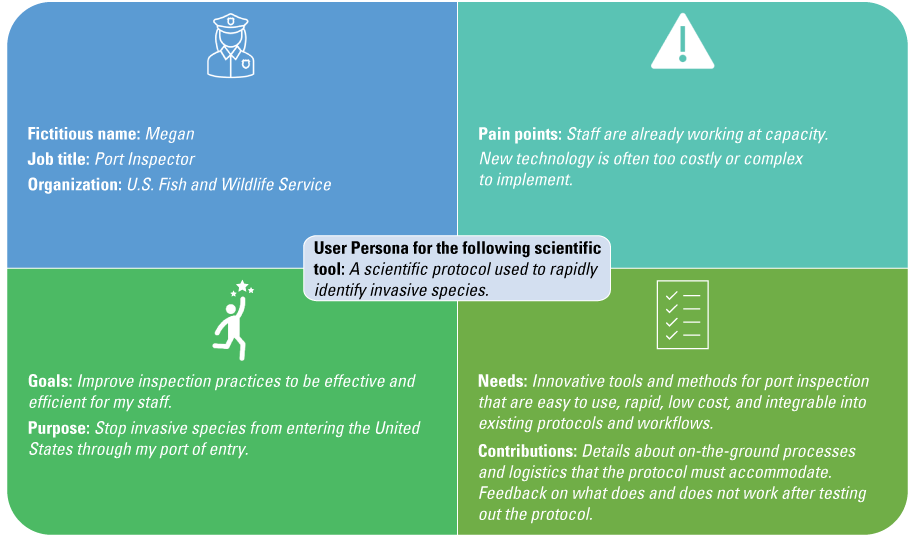

A helpful method from the design field for brainstorming potential end-users of your product involves creating user personas (Miaskiewicz and Kozar, 2011). User personas are fictional characters based on researching and understanding potential end-users of your product. This exercise guides your recruitment and conceptualization process by helping you and your project team think deeply about your end-users’ needs and experiences. One of the project teams included in the present study used a user persona exercise to inform the engagement and the design of a web tool. (A user persona template and an example for a project lead developing a scientific protocol are illustrated in figures 2 and 3.) This project’s lead noted that they only learned about end-users’ on-the-ground experiences after the end-users were recruited to participate in engagement activities. The end users’ on-the-ground experiences tested the project lead’s assumptions about user needs.

User personas are used to systematically recruit the necessary perspectives and plan what level and types of engagement are most appropriate for the various perspectives. This exercise can also be used to brainstorm potential experts to engage in the project by excluding the pain points portion of the exercise (fig. 2). Pain points refer to challenges end-users may face when using a scientific tool; since experts may not be end-users of the scientific tool, experts may not know the pain points.

Figure 2.

A worksheet for project teams to brainstorm user personas of potential end-users of the scientific tool. “Pain points” refers to challenges potential end-users may face when using a scientific tool.

Figure 3.

A worksheet example of a user persona for scientific protocol 1 (described in app. 2) illustrating the role, goals, challenges, and needs a potential end-user may have. Italicized text indicates examples of information a project lead would fill in. “Pain points” refers to challenges potential end-users may face when using a scientific tool.
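For teams that prefer to keep personas alongside their other project files, the worksheet can also be captured as a small data structure. The sketch below is one possible layout, assuming the worksheet fields are role, goals, needs, and pain points as described in the captions above; the class name, field names, and example personas are hypothetical and are not taken from the study's worksheets. Following the guidance above, the pain points field is left empty for expert personas.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Persona:
    """A fictional end-user or expert used to plan recruitment and engagement."""

    role: str
    goals: List[str]
    needs: List[str]
    # Omitted (None) for experts, who may not be end-users of the tool.
    pain_points: Optional[List[str]] = None

    def worksheet(self) -> str:
        """Render the persona as plain text for sharing with the project team."""
        lines = [
            f"Role: {self.role}",
            "Goals: " + "; ".join(self.goals),
            "Needs: " + "; ".join(self.needs),
        ]
        if self.pain_points is not None:
            lines.append("Pain points: " + "; ".join(self.pain_points))
        return "\n".join(lines)


# Hypothetical personas for illustration only.
end_user = Persona(
    role="County weed manager",
    goals=["Prioritize treatment sites each season"],
    needs=["Outputs at the county scale"],
    pain_points=["Limited time to learn new software"],
)
expert = Persona(
    role="Invasive plant ecologist",
    goals=["Ensure methods reflect current science"],
    needs=["Access to draft methods for review"],
)

print(end_user.worksheet())
print()
print(expert.worksheet())
```

Keeping end-user and expert personas in the same structure makes it straightforward to check which perspectives have been recruited and which remain unrepresented.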

Develop or Hire Staff with Facilitation Skills and Share Engagement Responsibilities

Some project teams noted that, as biologists or physical scientists, facilitation and engagement are not part of their expertise or how their performance is measured. However, trainings on negotiation, virtual meetings, webinar facilitation, and conflict management increased their team’s capacity to facilitate effective engagement. This lesson learned reflects the recommended best practices in the literature as well, such as the following indicator for effective engagement: “Research team has training or experience in collaborative research approaches” (Wall and others, 2017, p. 102). Similarly, Gerlak and others (2023) found that skillful facilitation supports effective communication and engagement processes. In other words, a particular aspect of engagement is how a project team facilitates interactions, such as group discussions during meetings. One project lead described the utility of facilitation training for biologists:

We’re biologists. We’re not particularly trained in that [meeting facilitation]…And so, I think early we were like, “Yes, engagement is good.” We didn’t really know how to do that…[facilitation training is] useful because there are going to be people. If you’re truly doing engagement, there are going to be people with opposing views…There are gonna be situations where you’re there to listen and hear the concerns, but then be able to take a breath and then still engage with that person and not run from it. (Interviewee 7)

Project teams noted that one team member is not enough to manage all engagement responsibilities, even for projects with small groups of participants. Furthermore, connecting participants with more of the project team increases knowledge exchange, relationship-building, and access to diverse expertise and perspectives. Therefore, project teams should consider sharing engagement responsibilities when possible and either hiring a team member who has expertise in engagement and facilitation or seeking training on engagement and facilitation. Sharing these responsibilities among a project team includes having a cofacilitator on virtual meetings to help monitor the chat, keep time, and facilitate virtual web tools. Sharing engagement responsibilities also includes sharing the responsibility of sending emails or newsletters, coordinating and scheduling interactions, and connecting the team with participants to build a network of knowledge exchange. One project lead described the importance of hiring staff specifically charged with engagement, because biologists are not always evaluated based on engagement:

Knowing my strengths and weaknesses and how I look at my time, it would have been very smart to actually fund someone [who] is specifically charged with doing this [engagement and partner communication]…This is so different because everything I get evaluated on—this is not one of them for the most part, right? So that’s one of the reasons why…it’s so easy to push to the side. (Interviewee 10)

Engage End-Users and Experts During Conceptualization

While external factors, such as rapid distribution of funding or accelerated timelines, can limit how early engagement begins, engaging end-users and experts as early as possible is a best practice highlighted throughout the literature on coproduction and product development (Ries, 2011; Wall and others, 2017; Bamzai-Dodson and others, 2021). Our interviewees emphasized that although it can be uncomfortable to engage potential end-users or experts before a draft product or concept for a product is formulated, there are benefits to doing so. One project lead characterized the necessity and discomfort that accompanied early engagement in a way that effectively reflected sentiments across interviewees:

I don’t have anything to show yet. And we don’t have answers to all the questions that [partners are] asking, and it’s really uncomfortable to go into a situation like that, not having answers. We want to be able to answer all the questions. We want to be able to say, “This is what we’re doing,” and have no fear, but it’s a really uncomfortable space to be. But I think embracing that discomfort, really, is playing out in a positive way in the long run…[the project team] got that engagement from the start and then we could honestly and truly tell people “Your engagement matters,” because we don’t have a plan yet…rather than coming to people afterwards and saying “We want your input, but everything is basically set in stone.” (Interviewee 7)

Creating or conceptualizing a product without first consulting the expected end-users inevitably means that project teams must make assumptions about end-user needs, day-to-day experiences, or context. Engaging potential end-users in the behind-the-scenes development demonstrates transparency and ensures that end-users’ perspectives are truly incorporated into the vision and function of the product. In contrast, requesting input after a draft or final product is created may mean there is minimal room to pivot and may appear disingenuous to the participant.

Project teams also emphasized the benefits of learning from experts early on in their project. These discussions could reveal existing resources and collaboration opportunities. The same project lead who emphasized how uncomfortable it can be to start engagement before you have all the answers reflected on an interview question about engagement timing:

Early engagement, because otherwise, you really are running the risk of duplicating efforts that are already out there. Yeah, you can do your market research and search the web and see what’s out there. But there’s actually so much that is in the works or only accessible to certain people, that if you’re not getting out there and talking to people you probably are recreating something…by doing that [talking to people], it also helped us to find our niche better, which is good for business…it doesn’t help anyone for us to be competing with other platforms…Working with those various platforms…now they’re some of our strongest partners. (Interviewee 7)

You almost wish that, in hindsight, having that opportunity to sit down and go over things in the hangar before we’re going down the runway—and getting them [partners] engaged in that at the very, very beginning of it…you may have ended up with something very, very different. But that [early engagement] didn’t happen, so, in hindsight, I wish I would have done that. (Interviewee 9)

In addition to engaging participants early, delivering drafts or versions of the work early rewards participants and keeps them engaged with the project (Reed and others, 2014). Similarly, one of the lessons learned reported by Stoltz and others (2023) included working iteratively to develop a tool in response to feedback and results from usability tests. One project lead on a scientific protocol looked to strategies established in the private sector that similarly emphasized the need to engage end-users quickly, before there was a perfect product. In the technology sector, project development with frequent feedback is commonly called agile development or a build-measure-learn process (sometimes referred to as a think-build-learn process; Ries, 2011). This project lead intentionally modeled the Ries (2011) build-measure-learn process and emphasized the value of an iterative approach:

You learn a lot by getting something out that isn’t perfect…[If you] build it [the product] as fast as you can and then get it out there and break it intentionally so you can then figure out how to make it better and learn from that…creating that build-measure-learn cycle. The faster that you can move through that cycle, the faster you get to a product or program that is more useful to your end-users. (Interviewee 10)

Step B—Engage the Necessary Perspectives

This section describes methods and approaches to engage the necessary perspectives.

Match the Engagement Level to the Engagement Purpose

Our findings reflect established best practices and guidance (Davidson, 1998; Bamzai-Dodson and others, 2021) for choosing the engagement levels and types of activities to facilitate for different end-users and experts. To thoughtfully recruit end-users and experts to engage in a project and establish clear roles and expectations, the project team should first determine what their engagement goals are. Different engagement activities accomplish different levels of involvement and fulfill different engagement purposes (Bamzai-Dodson and others, 2021). Table 3 presents an adaptation of a well-established framework useful for guiding what level of engagement (for example, inform, consult, collaborate, or coequal) is appropriate for a project and what engagement methods (for example, email lists, virtual group meetings, in-person workshops) are most conducive to facilitate that level of engagement. When coordinating with project leads on engagement plans, the first author adapted the Bamzai-Dodson and others (2021) table for congruency with scientific tool development instead of research projects. During the engagement planning process, the first author also incorporated feedback from project leads about points of confusion to inform table 3. For example, the name of the “Coequal” engagement category was originally “Empower”; however, this term clashed with realistic engagement possibilities of project teams and was changed to “Coequal,” which helped project teams more readily understand and apply this engagement level. Project leads used table 3 to brainstorm engagement strategies and design their engagement plans. Lessons learned from our interviews showed that some types of involvement (for example, “Coequal”; table 3) may be more appropriate for collaborators who work on the project itself, whereas “Consult” (table 3) may be more appropriate for end-users who provide feedback based on their experience using the tool.
The appropriate engagement level depends on the type of tool being created, the audience needed to contribute input, and the goals of the project team. For example, one project team focused on consulting end-users, but informing fellow experts.

[We have engaged] more of the science tech [technical] community…through traditional means, like our reviewed publications and presentations at scientific meetings where we focus more on the methodology. (Interviewee 1)

Table 3.

The engagement levels used by project leads as a guide for what activities and engagement levels to facilitate. [This table is adapted from Bamzai-Dodson and others (2021, table 1) and reflects guidance from coproduction and public participation research principles adapted for applying end-user and expert engagement in scientific tool development]

In contrast, another project team that produced an information product treated fellow experts as collaborators or coequals who were either involved in the project design or were coauthors of the final product. The experts could choose whether or not to participate.

Our other partners are all of the experts that we asked to participate, and it was an opt-in. There was no funding or anything toward them. They were helping us actually rate the risk based on the tool that we provided them and use their expert opinion. (Interviewee 3)

I was looking for folks that would be willing to work with us to do testing when it [the protocol] was ready, and to suggest different types of systems that were important. On the other end of the spectrum, coproduction and [collaboration], I wasn’t really looking for that either [because] I don’t think it made a lot of sense…I wasn’t expecting that partners would be coming into the lab alongside us to develop these sorts of things. (Interviewee 5)

In addition to asking project leads about their experiences recruiting and engaging participants, we also asked about what makes a good participant. Multiple project leads emphasized three characteristics: good participants (1) provide honest, critical feedback; (2) are responsive and communicative; and (3) are tolerant of an iterative development process.

Every project has specific purposes, needs, audiences, and logistical considerations that determine the most appropriate engagement level(s) to pursue. Just as the project leads who participated in this study used table 3, you can use table 3 as a guide to consider what level(s) and type(s) of engagement are most appropriate for the identified end-users and experts, and then plan your engagement activities in tables 4 and 5.

Table 4.

A template for planning the first steps in engagement, describing the end-users and experts who could be recruited, the engagement level appropriate to each person, and recruitment strategies. [End-users and experts were identified through a user persona exercise. Refer to figures 2 and 3 for the guidance in the user persona exercise that informed the “User and experts” column. The example of the port inspector is from figure 3]

Table 5.

A template for engaging end-users and experts in scientific tool development, with the engagement goals, activity types, frequency, follow-up actions, and types of end-users involved. [FWS, U.S. Fish and Wildlife Service; NPS, National Park Service]

Use Your Network, Boundary Spanners, and Leadership to Recruit Participants

Finding and keeping the necessary perspectives engaged was a common barrier emphasized by project leads. Overcoming this barrier is crucial for the tool’s success and building trust among participants. Representing user knowledge, needs, and priorities is similarly emphasized as a best practice for research project engagement (Reed and others, 2014). Once you are confident in your target audience(s), engagement purpose(s), and the engagement level(s) needed for each target audience, you can systematically recruit the necessary perspectives.

Your Existing Network

Social networks are an important resource for disseminating information, encouraging the adoption of new technology or tools, and recruiting collaborators (Lauber and others, 2011; Díaz-José and others, 2016). People you already know may be potential end-users or may have technical expertise they can lend to your project. These contacts provide a starting point when recruiting individuals to participate in your engagement activities and provide meaningful input.

However, your existing network could be limited to the perspectives and experiences you are already familiar with. To engage all necessary perspectives, you might need to reach beyond this network.

Boundary Spanners and Leadership

Generate a list of the organizations, regional groups, meetings, conferences, and professional societies that are relevant to your product’s topic of interest. Contact leadership in the identified organizations, as well as individuals who work in the type of role that may use your product. In addition, work with leadership in your own organization to connect you with their contacts in other organizations. Although leaders or high-level managers may not be the end-users of your product, their buy-in and awareness are critical to ensure that their employees are empowered and encouraged to adopt your product.

In addition, consider who acts as a boundary spanner. A boundary spanner is a person who has the potential to link people, processes, and information among groups and organizations (Schwartz and others, 2021). For example, if your product will support research and policy related to reptile trade in the United States, the reptile and amphibian program coordinator for the Association of Fish and Wildlife Agencies may be an important boundary spanner to connect your work to State natural resource managers.

If You Still Have Gaps in Perspectives, Broaden Your Outreach

Inviting participants through existing networks may fall short of recruiting all the perspectives needed to inform your project. Similar to recruiting participants through boundary spanners, some project teams made new connections by attending events in person, where networking is often a goal of attendees. Presenting at events accomplishes several goals, including spreading awareness about the project and keeping potential end-users and other stakeholders informed. In addition, project teams may present at or host multiple events in which the audience may or may not return to participate in other engagement activities. During these events, a project team may invite audience members to sign up for future communication or engagement with a sign-up sheet or subscription link.

Our team did a ton of outreach at conferences, at meetings, at various virtual events and things like that, and out of that [outreach] we would just get a lot of people contacting us directly being like, “Hey, I want to learn more,” or, “Hey, I want to get involved,” or, “I’ve got these ideas,” or, “Would you come present to my group.” (Interviewee 7)

Those experts we identify through personal relationships, through societal participation, through publication knowledge, and then at one point we even looked through just university departments, like entomological departments or other groups, and we sent this invitation. So, a lot of them [the experts] were kind of a cold call, to be honest. (Interviewee 3)

Table 4 is a template for planning or brainstorming recruitment efforts based on the user personas outlined using figure 2.

Establish Clear Roles and Expectations for Participants

Establishing clear roles and expectations for the project teams and participants ensures effective engagement.

Identify and Meet Participants’ Expectations

A key lesson learned from our study is that by establishing clear roles and expectations, the project team can follow an agreed-upon frequency and type of communication with participants and be confident that these expectations are fulfilled. This practice prevents participants from wondering when the project team will provide an update on the project status or assuming that the project continued without their participation and feedback. If you do not clearly communicate what frequency or form of engagement to expect, you are more likely to fail to meet participants’ expectations.

Establishing early on what the expectation is for communication [is vital]…are they [the partner] looking for quarterly updates, annual updates, or just check-ins when you have something cool to share…having that conversation early on [about] how frequently folks want to be engaged and how they want to be engaged. (Interviewee 10)

Establish Expectations for Participants and Their Time

Participant roles can vary, from staying informed to providing feedback, contributing to the work, or being a coequal project team member. For example, a coequal project team member may be the coauthor on an information product or a staff member of a partnering organization developing one or more components of the final product. Lessons learned from our study demonstrate that, when recruiting participants, it is helpful, if possible, to provide and clearly articulate multiple participation options from which individuals can choose one or more. For example, individuals may choose to stay informed by subscribing to an email list or to consult by participating in a group that meets quarterly to provide feedback on new versions of the product. When the project team is collaborating with an individual or group, the team should communicate expectations for the time commitment, activities, and workload these collaborators will be responsible for. The team can communicate these expectations through an initial one-on-one or group meeting.

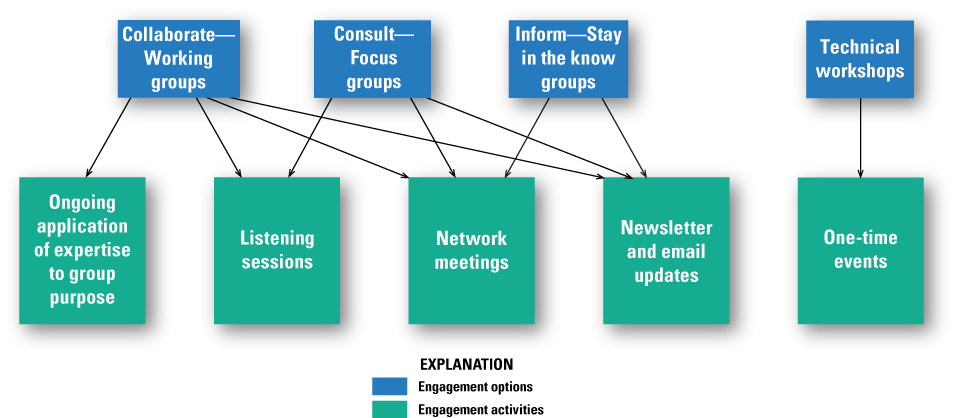

An effective method for recruitment and establishing clear roles and expectations was demonstrated by one project team. That project team created communication materials about specific engagement activities that varied in the time commitment expected and the purpose of the activity. The diagram shown in figure 4 illustrates the options participants could choose from. The project team initiated recruitment by sending an invitation to a one-time virtual kickoff meeting to various organizational leaders’ and boundary-spanning organizations’ email lists. During the kickoff meeting, the project team recruited participants by inviting participants to select which engagement options (discussed in fig. 4) the participants would like to be included in. For example, the participants could choose to (1) stay informed by choosing the “In the Know” group, (2) be consulted by signing up to join “Focus Groups,” or (3) collaborate by joining “Working Groups.” Participants subscribed to an email list for the chosen activity (or activities) and received subsequent announcements for meetings. The project team then shared responsibilities in facilitating the various activities.

A conceptual diagram example presented to potential end-users by a web tool project team to illustrate the participants’ engagement options.

Step C—Offer Sufficient and Accessible Opportunities to Engage

This section describes methods and approaches to offer participants sufficient and accessible engagement opportunities.

Offer Different Engagement Opportunities

People vary in participation capacity as well as comfort and communication preferences. By offering different engagement opportunities, a project can accommodate diverse personalities and preferences and is more likely to hear from the perspectives that are necessary to inform the project. Engagement opportunities may include a mixture of virtual group meetings, emails, and in-person events, and varied avenues for providing input, especially in virtual group meetings. For example, when facilitating a group meeting, project teams can encourage participants to answer discussion questions by raising their hand, unmuting, posting their ideas in the chat, or brainstorming as a group anonymously on a virtual whiteboard application or in smaller breakout rooms. This encouragement ensures that participants who are less comfortable speaking up in front of the group have other options for sharing their feedback.

We had different types of roles because not everybody has a ton of time to commit…They [potential participants] might be interested, [but] they’ve got a million other things pulling them in different directions. So, we wanted to have an opportunity for people who did want to be more engaged, and an opportunity for people who could pop in and out when they had time, and an opportunity for people to just…stay in the know. That was very intentional, with having those different levels of involvement. (Interviewee 7)

One project team, working collaboratively with fellow experts to develop an information product, found that creating a collection of materials that participants can access at any time was a helpful resource for collaboration. This collection included an overview of the project, detailed written and video-recorded instructions, and related literature. Participants, especially collaborators or coequals on the project, appreciated this level of organization and accessibility.

Two project teams (one developing a scientific protocol and the other developing an information product) emphasized the benefits of customizing communication to participants’ preferences and needs when possible. For example, some participants requested one-on-one meetings to discuss specific questions that these participants felt uncomfortable asking in a group setting. Others preferred that information and files be shared as attachments in an email.

Plan Frequent and Regular Engagement Opportunities

Participants preferred more interaction as opposed to less. In our evaluation of project teams’ engagement with participants, survey respondents were consistently more likely to perceive the frequency of any given type of interaction as too little as opposed to too much. In other words, some project teams were overly worried about burdening their participants and not concerned enough about scheduling frequent and regular interaction.

Avoid Lapses in Communication as the Project Team Waits for a Significant Milestone to Share

Several project teams reflected that when time passes without interacting with their participants, the project teams are indeed working to develop the product and plan to follow up with participants only when there is a new version ready, something substantial to share, or a milestone worth reporting on. Project teams were concerned about imposing on the participants’ time each time the project teams reached out, if there was no major news. However, during this lapse in communication, participants may wonder whether they are out of the loop, the product has been finalized without any additional input from them, or the feedback they have already provided has not been incorporated. Rather than waiting for a significant update before communicating with participants, project teams should schedule frequent check-ins through an email list, newsletter, or group meetings.

Avoid Feeling Like an Imposition

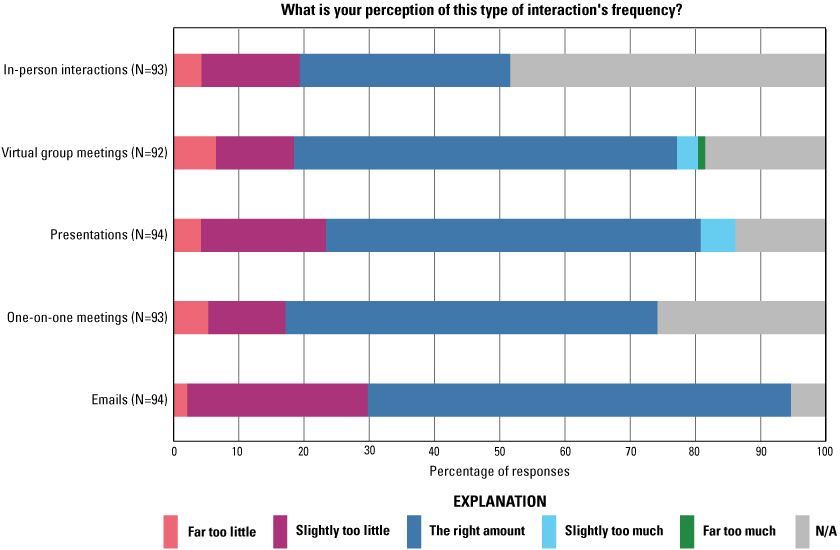

Project teams often worry about burdening or imposing on participants with too many emails, meetings, or presentations. However, on the survey measuring participants’ perceptions of interaction frequency, very few participants chose the responses for “Too much” or “Slightly too much” interaction frequency, but many chose “Too little” or “Slightly too little” interaction frequency (fig. 5).

A bar graph of survey results measuring participants’ perception of the frequency of various types of interaction. These participants were engaged in projects to develop U.S. Geological Survey scientific tools. Data from Clements and Wilkins (2025). N, number of responses; N/A, not applicable.

Before seeing the survey results from their participants, one project lead explained that they believed participants were overburdened with emails and meetings:

I don’t wanna bother the person. I know every single one of them is overworked. And here, I’m gonna reach out to them and say, “Hey, I want you to do more work.” (Interviewee 9)

I should just reach out to people…[this result] totally changes my perception on that [interaction frequency]. (Interviewee 9)

Engage, engage. Engage. Don’t hesitate…[Send participants] emails. Message them. Don’t worry…don’t try to be polite…[don’t think] “Oh, I don’t want to burden you.” Burden them…they’re good. Just do it. (Interviewee 9)

For the last year and a half, I have done much less with partner engagement. For multiple reasons, one of which is that we are in the midst of executing what we had talked about…so we can go back to this group and say, “Okay, we did what we said we were gonna do.” (Interviewee 10)

One project lead, who was worried about participants feeling that the project was an imposition, saw a regular newsletter as a solution for keeping participants regularly informed. A newsletter allows people to subscribe and unsubscribe without directly contacting the project team.

But we also don’t want to bother people…So, I like the newsletter…I don’t want to flood people’s inboxes who don’t want to receive those emails. But I think with the newsletter, where you control your own subscription, that’ll make it a lot easier to be like, “Hey, there’s going to be this presentation…so please join if you’re interested.” (Interviewee 1)

Host at Least One Recurring Meeting With All Participants

When engaging participants in different ways (such as one-on-one or small group meetings, which prevent awareness of other participants), it may be helpful to host at least one recurring virtual meeting two or more times a year with all participants. If you offer other meaningful opportunities for engagement, then two may be enough, but if virtual group meetings are your primary method of engagement, then three may be needed, as noted by one project lead upon reviewing survey results:

It’s really more about staying up-to-date with what’s going on and having regularly scheduled meetings that they [participants] know that they can look forward to updates. Like maybe three times a year. I think once a year is probably too little. I think twice a year is better. (Interviewee 2)

A virtual meeting, instead of an in-person meeting, ensures access across budgets, schedules, and a general capacity to travel. At virtual meetings, all participants receive the same information at one time and are sufficiently aware of project updates. An important aspect of end-users’ trust in the project team and trust in the quality of the final product is knowing that the necessary perspectives are engaged in the project. Recurring large group meetings provide a transparent mechanism to show the breadth of perspectives involved. Additionally, large group meetings can be an opportunity for participants to hear the perspectives of others who may have diverging views on the final product and explore mutually agreeable solutions.

I think the challenge really is that you have all these individuals with their own perspectives and their own thoughts on [a topic] that sometimes the information you get contradicts [information from other individuals], so you’re really left in a “Where do I go from here?” [quandary]. (Interviewee 8)

In addition, reflect on how engagement varies across groups. If you invest a lot of time and energy into outreach and engagement, such as presenting at conferences and facilitating meetings, consider how frequently or consistently you interact with the same individuals or groups. Project teams sometimes feel as though a lot was invested into engagement activities, but then receive feedback that engagement has been inconsistent, because those activities were not all with the same group of people, or the same people were not able to attend all interactions. Consistent interaction with the same participants ensures that participants feel in the loop and have opportunities to provide feedback at the right times in the project.

Step D—Make Engagement Activities Meaningful

This section describes methods and approaches to make engagement activities meaningful.

Explore Use Cases with Your End-Users

Scientific protocols and web tools can benefit from this method. One of the most meaningful forms of engagement for scientific protocols and web tools, noted by multiple project teams, is working one-on-one with end-users to understand the challenges in their work and how a product can overcome those challenges or improve efficacy and efficiency. Understanding use cases typically requires one-on-one or small group interactions in which you ask the end-user questions about how they currently perform their job functions and how your product may support their work. Additionally, once you have a product for the end-user to test, you can then ask how the product is used, why the tool is needed, what features of the product are most useful, and how the product could be improved. This information complements and confirms lessons learned reported by Stoltz and others (2023): defining metrics of success, analyzing tool use trends, and understanding user experiences are key steps for developing an empirical understanding of the use and usability of decision-support tools.

For web tools in particular, virtual interactions, as opposed to in-person interactions, can be used to understand use cases. Project teams creating web tools note the importance of learning how individual end-users use the tool, including uses that the project team had not considered. One project lead working on a web tool emphasized the benefits of individual narratives about how the tool is used:

I think the most meaningful [information] is really when folks are like, “Here’s how we’re using the tool,” because you’re not gonna know that until somebody tells you. (Interviewee 2)

If you are creating a scientific protocol or kit for use in a laboratory or in the field, in-person site visits (in which you demonstrate, train, or allow the end-user to test out the product) are a critical step toward creating a useful product. This form of engagement allows the end-user to learn how to apply the product to their work and encourages early adoption, while you learn firsthand what assumptions you have made about use cases and how the protocol needs to be revised. One project lead, developing a scientific protocol, emphasized that exploring use cases on site with end-users was critical to the product development:

In-person trainings—it’s been a lot. It’s taken a lot of time, but I don’t think…there’s a better way to go about it. Yeah, you could make a video or do something virtually. But it’s not the same…I’ve learned a lot myself going out on these site visits. I’ve learned how to better train on these, but also, things get brought up that I may not have thought of. Or I make assumptions about how they’re [end-users] going to use it [the product] or what places they’re going to sample and how well it’s going to work and find—Oh yeah, if we’re going to be on a boat, maybe we need to make this part a little easier—That kind of stuff, so almost logistical stuff…I don’t think there’s been a time when I’ve gone out that I haven’t gone back with a slight tweaking of some aspect of how I’m thinking. (Interviewee 5)

Facilitate Activities Where Participants Feel Comfortable Sharing Their Feedback

Trust is a requisite for knowledge exchange. Meadow and others (2015, p. 187) describe convening as one approach to trust-building: “the process of bringing parties together for face-to-face contact; this [process] forms the foundation for relationships of trust and mutual respect.” In the present study, project leads noted that participants vary in their preferences and willingness to speak up, especially in larger groups or among peers. One project lead discussed the interaction types in their participant group:

I think because it’s such a diverse group and people don’t know each other, there’s probably hesitancy to speak up. So, a lot of the one-on-ones were probably the most meaningful [interaction]. (Interviewee 3)

Activities can build trust among participants, increase participation, and generate more diverse, comprehensive input. Activities identified by project teams that nurture comfort among the individuals in participant groups include virtual web tools, small group discussions, and in-person, informal interactions. You may also customize how you communicate with individual participants based on their requests. Making participants comfortable encourages participation and critical feedback:

What really sticks with me for the in-person workshops and for actual trust building—it’s more the time you spend outside of the meeting [that is important]. What was really critical for the success of that meeting was folks being able to go out to dinner, go on runs together, go on walks…And it was, more, building social networks that then allow folks to be a little bit more open, less guarded, when you’re actually doing the workshop activities…the [brainstorming] activity was fantastic, but one of the reasons why it was fantastic is because folks had spent a day and a half together in an awesome place, getting to know each other first, and then they were able to just throw out random ideas and care less whether it was a good idea, a bad idea, because they had trust with one another. (Interviewee 10)

Some of the ones that stand out in my mind are when people totally just shoot down our ideas…because I think that’s honest. I think it takes a level of comfort for somebody to feel like, “Yeah, I’m not just going to go along with this.” So, I think when that happens, it’s like, “Oh, we’re connecting right now”…like [when people say] “Sure, but I can already do it this other way.” Cool. Thank you. Don’t waste my time, and I won’t waste yours. (Interviewee 7)

Step E—Facilitate Interactive Meetings

This section describes methods and approaches to facilitate interactive meetings.

Request Feedback Directly from End-Users

A common practice in the scientific field is to invest heavily in the “Inform” engagement level (in other words, one-way direction of communication; Beier and others, 2017). Types of interactions that accomplish this engagement level often include presentations about, plans for, updates on, or results of scientific work, followed by time for questions. However, this format does not foster two-way interaction and limits meaningful knowledge exchange among end-users and project leads. It can also create a power imbalance between those doing the science and those using the science by leaving little room for those using the science to have a voice in the project’s purpose, design, content, or final product. Though “Inform” might be the appropriate engagement level in some situations, developing scientific tools to empower the end-users requires a two-way interaction directly with end-users. One project lead emphasized that direct interaction with end-users ensures that end-users’ needs are met and end-users continue to be involved:

That would be my advice, that it’s that really direct engagement and back and forth, particularly if the partner’s having issues or they need some additional [equipment] or something. Just trying to be really responsive to that and keep their momentum going, because if they [project leads] kind of stop and there’s a big gap, [partners are] probably going to lose interest. You probably lost that partner. (Interviewee 5)

Project leads in this study recognized that there is more to engagement than just sharing information or marketing your project. Interactions must be facilitated in a way that fosters the exchange of ideas and knowledge and generates input directly from the end-user on the final product. One project lead reflected that encouraging feedback requires more than just presentations and answering questions:

A lot of our meetings started out with a little bit more presentation style and Q&A [questions and answers]. And, you know, Q&A seemed like engagement. But it’s also not, because you’re going to hear the same voices over and over, which, what they have to say is important, but it’s not the only voice and opinion that’s out there. And we wanted to find ways that could get the whole meeting engaged with those people who aren’t going to raise their hands in a meeting and speak up. (Interviewee 7)

Project teams requested feedback directly from end-users through various interactions, exercises, and virtual meeting tools. Complete lists of these activities are in appendixes 1, 2, and 3. The following are several examples of these activities:

• One project team developing a scientific tool facilitated a meaningful in-person activity (though this activity can also be facilitated virtually using virtual whiteboards) called the “MoSCoW” (must-have, should-have, could-have, and won’t-have) exercise (Vijayakumar and others, 2024). In the technological sector, MoSCoW is a well-established exercise that asks end-users to brainstorm what a product must have, should have, could have, and won’t have. Notably, the project lead emphasized that part of why the exercise was so successful was that before the activity began, the participants spent several days in informal sessions together in person, including sharing meals or visiting local attractions. The feedback generated through this exercise from end-users ultimately informed the specifications and functionality of a piece of technology needed to perform the scientific protocol.

• Another project team developing an information protocol facilitated one-on-one meetings in which the project team guided the end-user through the drafted product and asked for specific feedback on the content, language, and presentation of the information.

• A project team developing a web tool facilitated meetings with end-users in which the project team presented new features and updates and then prompted participants with topics and questions for discussion using a virtual whiteboard that easily facilitated the exchange of ideas.

• Finally, two project leads working on the same information product struggled to generate feedback from participants during one-on-one meetings. Both project leads noted that guiding these meetings with a prepared set of questions, targeted at what the project leads needed to know to inform their product, may have generated meaningful feedback.

Clearly Articulate How Feedback Was Integrated into the Product

Our study found that communicating to participants how their input was used or considered in designing the product is critical to the success of project engagement and, ultimately, of the product. Be intentional about showing that you heard the participant’s feedback and how you have incorporated that feedback into the product, or why you chose not to. Closing the loop in this way is essential to ensure that participants trust the team, feel heard, and are invested in a tool that they themselves helped design to be useful to their work. You can do this first by sending follow-up emails after meetings summarizing the feedback you received, and second by presenting (in the following meeting) the specific additions and revisions the team made to the web tool based on each piece of feedback. Making participants feel heard and showing how feedback is incorporated may take time, but it creates a feedback loop that end-users learn to trust and stay engaged in.

I’m always an advocate of follow-up emails after meetings, letting folks know what our big takeaways were from what folks had to contribute…I think it’s a good way to connect with folks and let them know that they’re being heard. (Interviewee 2)

They got to actually see an advanced prototype of the [tool] that they had given guidance on the previous year, so they actually got to see it and try to operate it. So, that was really cool to see, because they felt some ownership of it, since they had provided some of the design specifications…Because they were involved in the creation of this [tool], when they did see it about 6 or 7 months later, their excitement and support and enthusiasm [were apparent], because it’s cool tech, but also because they felt that they contributed to it. So that’s been—I think—one of the highlights. (Interviewee 10)

Make the Most of Virtual Meetings and Meeting Tools

Our interviewees noted that facilitating group interaction and using virtual meeting tools can make virtual meetings as meaningful as possible. Although project teams included in our study often found in-person interactions to be the most meaningful, virtual engagement was also an opportunity to engage many people across a large geographic area without the costs associated with in-person meetings and events. Furthermore, many tools and techniques can increase engagement and interaction during virtual meetings. Using virtual meetings to your advantage is especially relevant for web tools and information products but can also be applied to scientific protocols and group discussions. Scientific protocols rely more on in-person interaction to test the protocols in the field, whereas information products and web tools can easily be accessed, tested, and discussed through virtual interactions.

Virtual interactions can include perspectives from people who otherwise would not have the capacity to travel for in-person meetings:

With COVID [coronavirus disease 2019], we shifted to more of a virtual way of doing things, and that seems to have worked out because it allows us to have more people from all across the country engaged than if we did something where people had to travel. (Interviewee 6)

Given the national extent of the data coverage, it’s harder to have an in-person thing that is inclusive of the disparate potential users. (Interviewee 2)

I always prefer in-person interactions…But I have been pleasantly surprised with some of the changes that we’ve made and how we interact virtually and make it more…of a group effort rather than just a presentation…Since the nature of this work is spread across the whole nation, you can’t be in person everywhere, all the time. And I think that virtual engagement has opened up the opportunities [so] that when we are there in person, we can actually make the most of it in person. (Interviewee 7)

Virtual Whiteboard Applications

Our findings show that offering multiple ways for participants to share ideas increases the utility of virtual meetings. One project lead noted that offering several ways to share feedback within one virtual meeting (for example, a virtual whiteboard or website that allows participants to interact and collaborate, the meeting chat, and verbal discussion) increases participation:

We can have that piece, as well as the chat, as well as verbal discussion. So, we can get feedback, kind of, from those three different pieces. And I feel like we end up getting valuable pieces of information from all three. (Interviewee 1)

[This virtual whiteboard software] is most recently what we landed on that helped. That was the one that checked a lot of those boxes, and it’s cool because people can write out an idea. And then, other people can comment on that idea and thumbs-up it if they agree with it, or [say] like, “Yep, me too.” And so, it has this built-in discussion ability. (Interviewee 7)

We tried [a meeting polling application], but it was less flexible, even in the number of questions you could post. It’s nice with [the application], ’cause we could have different options that people could upvote or downvote, but then they can also add their own suggestions. (Interviewee 1)

Project leads perceived that virtual whiteboard software was the most useful because of the following features:

• The participant could access the whiteboard easily without needing to create an account.

• The participant could add to the whiteboard anonymously, which encouraged honest feedback and input from quiet participants.

• The whiteboard was designed in a way that generated interaction among participants, not just answers to questions. For example, the project team could start with several written prompts, which participants could respond to, but participants could also create their own prompts, respond to each other’s comments, and react to posts on the whiteboard with a thumbs-up.

Virtual whiteboards enabled meaningful engagement that made participants comfortable sharing ideas more openly, encouraged interaction among participants, and promoted knowledge exchange. Table 5 can be used to plan engagement activities to meet your goals, including the types and frequency of activities, follow-up actions, and the perspectives you seek to involve. Table 5 is adapted from a similar table that the project teams used in the present study to plan their own engagement.

Avoid Common Pitfalls—Challenges to Facilitating Effective Engagement

This section describes challenges and how to avoid common pitfalls when engaging end-users and experts. This guidance can apply to any step of the engagement process.

Send Regular Updates About the Project

Project teams often worry about imposing on participants’ time with meetings or email updates. However, survey results show that participants far more often perceive a type of interaction as not frequent enough than as too frequent.

I don’t wanna bother the person. I know every single one of them is overworked. (Interviewee 9)

Find and Engage Participants with the Necessary Perspectives