Design and Function of the Autonomous Benthic Imaging and Surveying System (ABISS) for Remote Sensing of Lake and Seabed Environments

Acknowledgments

The authors are indebted to Nicholas Goumas, Matthew Johnson-Roberson, Mike Ferreira, Lorri Liggett, and Tyrell Thompson for their discussions, insights, and experiences. Important input was also provided by Brian White and Richard Chase of the Michigan Tech Research Institute. This work was supported by appropriated funding to the U.S. Geological Survey under the Great Lakes Fisheries Research Authorization Act of 2019 (16 U.S.C. 931 et seq.).

Abstract

Lake and seabed environments are home to fisheries and other biota that are important to ecosystems and economies, yet these environments and the species that use them are difficult to accurately assess and monitor. Traditional benthic survey techniques, like bottom trawling used by the U.S. Geological Survey, are limited by substrate constraints, poor spatial resolution and precision, and operational depth limits, hindering accurate assessment of benthic species and habitats. In response to these limitations, the U.S. Geological Survey developed the Autonomous Benthic Imaging and Surveying System, a camera system integrated into underwater vehicles, to capture high-resolution images of the lakebed. The system uses color and stereo cameras to collect imagery, which can be analyzed using computational methods to detect organisms and (or) characterize habitat features, such as geologic substrate types. The system has been integrated into autonomous underwater vehicles and into an underwater housing used by self-contained underwater breathing apparatus (SCUBA) divers. Although the engineering of the system was motivated by the need for data collection in the Great Lakes, it has potential to collect high quality data in any aqueous setting with sufficient water clarity and safe operating conditions. The Autonomous Benthic Imaging and Surveying System can operate across diverse depths and light conditions to map and quantify ecological patterns that were difficult or impossible to assess using traditional methods. The Autonomous Benthic Imaging and Surveying System offers the potential for more accurate and precise monitoring and assessment of native benthic biota, invasive species, and habitat, potentially providing natural resource managers with improved information to support decision making about benthic resource management.

Plain Language Summary

Lake and seabed environments are home to fisheries and other biota that are important to ecosystems and economies. To conserve and manage these environments, it is important to be able to document and map them. This report presents a new technology that allows scientists to accurately study lake and ocean environments. Specifically, we report on the design and function of a robotic imaging system called the Autonomous Benthic Imaging and Surveying System (ABISS), of which two versions have been created. The ABISS systems have been used to capture high-resolution images and data from the Great Lakes, informing scientific research and discovery there. The report points to the similarities and differences between the two versions of the system and describes their potential uses.

Introduction

The use of robotics in large lake and ocean science has surged during the past decade with applications to fisheries assessment, lake and seabed mapping, water quality profiling, and other topics (Aguzzi and others, 2022; Xie and others, 2022; Constantinoiu and others, 2024; Handegard and others, 2024; Ussler and others, 2024). For instance, autonomous underwater vehicles (AUVs)—untethered robots that can be programmed to navigate specific tracks carrying science payloads—are produced by numerous manufacturers at a wide range of costs and capabilities. Camera payloads on AUVs can be used in scientific studies to map physical habitat, fish abundances, vegetation, coral reef communities, underwater archeological sites, and other features (for example, Diamanti and Ødegård [2024], Bonin-Font and others [2025], and Esselman and others [2025]). Autonomous imaging of benthic environments—in other words, those associated with the seafloor, lakebed, or riverbed—is a special area of practice that requires vehicles that can safely operate close to the bottom and gather images at sufficient frame rates, resolution, and exposure to support scientific objectives.

The benthic ecosystems of the Laurentian Great Lakes of North America are substantially similar to marine coastal benthic environments in their size and depth gradients and have compelling needs for benthic monitoring and assessment. The Great Lakes were invaded by benthic mussels (Dreissena rostriformis bugensis [quagga mussels]; and Dreissena polymorpha [zebra mussels]) and a benthic fish species (Neogobius melanostomus [round goby]) beginning in the late 1980s, leading to whole ecosystem shifts in limnological characteristics, food webs, and fisheries (Bunnell and others, 2021; Karatayev and Burlakova, 2025). Increases in water clarity caused by mussel filtering activity allow more sunlight to fall on the lakebed, which can lead to such abundant growth of native benthic algae biomass that it can foul beaches and promote the growth of botulinum bacteria that can concentrate in the food web and kill wildlife (Pérez-Fuentetaja and others, 2011; Chun and others, 2013). Round goby, which eat Dreissena spp. (quagga and zebra mussels), have become dominant prey for economically valuable predators including Salvelinus namaycush (lake trout), Coregonus clupeaformis (lake whitefish), Lota lota (burbot), Sander vitreus (walleye), and others (Pothoven and Madenjian, 2013; Roseman and others, 2014; Staton and others, 2014; Happel and others, 2018; Luo and others, 2019). Improved assessment of round goby is a major priority for regional fisheries managers. Similar to many marine and riverine settings, the high natural water clarity of the Great Lakes, which has improved since mussel invasion, creates conditions where underwater imaging can be very effective.

The ecosystem changes brought about by benthic invaders in the Great Lakes created a need for improved information about the distributions and abundances of lakebed biota that could be met by underwater robotic imaging systems. The small sizes of target species, such as quagga and zebra mussels with typical lengths of 10–15 millimeters (mm) or round goby with typical lengths of 40–180 mm, demand very high-resolution images to distinguish their edges and markings. Such sensing challenges are analogous to the detection of organisms like Placopecten magellanicus (the Atlantic sea scallop) in the northeastern marine benthic environment of the United States (Ferraro and others, 2017). Resolutions needed to sense abiotic features like geologic substrate are also very high. For instance, distinguishing sand from pebbles requires separating features less than 2 mm on the long axis from those 4 mm and larger (Geisz and others, 2024).

In response to new challenges for benthic monitoring, the U.S. Geological Survey (USGS) engineered hardware and software systems to collect underwater images with submillimeter horizontal resolutions. Two camera systems with similar components were developed: a self-contained underwater breathing apparatus (SCUBA) diver operated camera system and one integrated into AUVs. The engineering objective of the effort was to develop integrated systems that could operate close enough to the lakebed to gather submillimeter resolution images useful for quantifying small-bodied organisms and substrates in imagery. This report describes hardware and software systems developed by the USGS since 2018 to image benthic environments.

The Autonomous Benthic Imaging and Surveying System (ABISS)

The USGS collaborated with the Michigan Tech Research Institute (Michigan Technological University) and the Sexton Corporation (Salem, Oregon, U.S.A.) to design and build the Autonomous Benthic Imaging and Surveying System (ABISS). Since it was initially engineered in 2018 and 2019, the system underwent one major redesign in 2022, resulting in first- and second-generation systems referred to as ABISS1 (version 1) and ABISS2 (version 2), respectively. Both versions of the ABISS system were integrated into a SCUBA diver-operated housing (that is, “dive camera”) and an L3Harris Iver3 AUV. In the description of the sensors and integrations that follows, the now retired ABISS1 system is referenced in the past tense, and ABISS2 is referenced in present tense because its use continues at the time of writing (January 2026).

Both versions of the ABISS system were engineered with a common set of design criteria in mind. The goal was to develop an autonomous imaging system capable of surveying the lakebed with sufficient horizontal resolution to discern individual organisms as small as 10 mm in length across a range of depths and light levels and with sufficient image overlap to support photogrammetric reconstruction of three-dimensional (3D) structures. The previously mentioned target organisms (round goby, quagga and zebra mussels) inhabit water depths ranging from 0.5 meter (m) to approximately 200 m, which include areas in full darkness below the photic zone; therefore, the imaging systems had to be capable of imaging the lakebed in light conditions ranging from bright sunlight in clear, shallow waters to complete darkness in water as deep as 200 m. In addition to organism detection, a secondary goal was to describe 3D habitat structure and bottom type, including substrates and benthic algae. For this reason, the system was designed to produce real-time elevational point clouds from stereo image pairs and to collect images at frame rates sufficient to support photogrammetric reconstruction from overlapping monocular images (that is, structure from motion [SfM] techniques).
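As a rough check on what these design criteria imply, the following sketch estimates the lakebed footprint of a single pixel (the ground sample distance) from a simple pinhole camera model. The 1.5 m altitude and the 3.45 micrometer pixel pitch are illustrative assumptions and are not values stated in this report; refraction at the viewport and lens distortion are ignored.

```python
def ground_sample_distance_mm(altitude_m, focal_length_mm, pixel_pitch_um):
    """Return the lakebed footprint of one pixel (mm) under a pinhole model.

    Refraction at the viewport and lens distortion are ignored.
    """
    if min(altitude_m, focal_length_mm, pixel_pitch_um) <= 0:
        raise ValueError("all inputs must be positive")
    # altitude (mm) * pixel pitch (mm) / focal length (mm); the factors of
    # 1000 from m->mm and um->mm cancel.
    return altitude_m * pixel_pitch_um / focal_length_mm


def pixels_across_feature(feature_mm, gsd_mm):
    """Return how many pixels span a feature of the given length."""
    if gsd_mm <= 0:
        raise ValueError("gsd_mm must be positive")
    return feature_mm / gsd_mm


if __name__ == "__main__":
    # Hypothetical geometry: 1.5 m altitude, 16 mm lens, 3.45 um pixels.
    gsd = ground_sample_distance_mm(1.5, 16.0, 3.45)
    print(f"Ground sample distance: {gsd:.3f} mm per pixel")
    print(f"Pixels across a 10 mm organism: {pixels_across_feature(10.0, gsd):.0f}")
```

Under these assumed values the footprint is about one-third of a millimeter per pixel, which is consistent with the submillimeter objective; a different altitude, lens, or sensor would change the result proportionally.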

Both versions of ABISS used or use the open-source Robot Operating System (ROS; Open Robotics, 2016; Open Robotics, 2020) to combine data from multiple sensors. Because of the use of ROS, the ABISS camera control computers use the Ubuntu distribution of Linux (Ubuntu, 2020; Ubuntu, 2023) for their operating systems (OS). Each ABISS version also has or had custom written control software that combines the Python (Python Software Foundation, 2023) and the C++ (ISO, 2017) programming languages to start and stop data collection and modify camera operating parameters. In ROS, each sensor in the system (for instance, primary camera and stereo camera) has a node that publishes its data to a topic as a message. The topics are recorded in a ROS-specific format called a .bag file. Additional nodes are used to control the recording process and facilitate user interfaces. The files from each deployment of an ABISS system are grouped within collects—that is, discrete data collection events—and are assigned unique collect identifiers during the download process.
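The node, topic, and recording pattern described above can be illustrated with a toy model written in plain Python. This sketch does not use ROS or reproduce the ABISS software; the class names and topic names are hypothetical and serve only to show how sensor nodes publish messages to named topics while a separate recorder captures the selected topics in arrival order.

```python
from collections import defaultdict


class ToyBus:
    """Minimal stand-in for a publish/subscribe layer (not the ROS API)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        # Deliver the message to every callback registered on this topic.
        for callback in self._subscribers[topic]:
            callback(topic, message)


class ToyRecorder:
    """Stand-in for a recording node; stores (topic, message) pairs in order."""

    def __init__(self, bus, topics):
        self.log = []
        for topic in topics:
            bus.subscribe(topic, self._on_message)

    def _on_message(self, topic, message):
        self.log.append((topic, message))


if __name__ == "__main__":
    bus = ToyBus()
    # Hypothetical topic names, chosen only for illustration.
    recorder = ToyRecorder(bus, ["/primary/image", "/stereo/image"])
    bus.publish("/primary/image", "frame-0")
    bus.publish("/stereo/image", "pair-0")
    bus.publish("/unrecorded/topic", "ignored")
    print(recorder.log)
```

In the toy model, as in the system described, the publishers do not need to know whether anything is recording; the recorder decides which topics to capture.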

Autonomous Benthic Imaging and Surveying System 1 (ABISS1)

Hardware

The ABISS1 systems contained an 8.95-megapixel color primary camera (Allied Vision Manta G-895C with a 16 mm lens), and a stereo camera with paired 2.3-megapixel monochrome cameras (Basler ace acA1920-40um with 12 mm lenses) triggered by the Nerian SceneScan Pro stereo vision sensor (table 1). The Nerian SceneScan Pro (from here forward referred to as the “stereo vision sensor”) produced real-time disparity maps and point clouds from synchronized image pairs. The primary camera was set to record at 6 frames per second (fps), and the stereo camera recorded at 5 fps, which ensured sufficient overlap for high quality photogrammetric reconstructions. The primary and stereo cameras were originally intended to be synchronized by having them triggered by the stereo vision sensor, but the primary camera was not configured properly, resulting in the cameras being triggered independently and at different frame rates. All cameras were oriented with their right side to the front, except for the primary camera in the dive camera integration, which had its left side to the front. Lighting was provided by two SeaLife Sea Dragon 2500F lights, providing a total of 5,000 lumens. The camera control computer was an Intel NUC7i7DNKE. The AUV integration used a 4-terabyte solid-state drive (SSD) for its OS and data storage, and the dive camera used a 128-gigabyte SSD for its OS and swappable 256-gigabyte SSDs for data storage.
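The relation between a stereo disparity map and a point cloud can be sketched with the standard rectified-stereo equations. The example below is a generic illustration and not the stereo vision sensor's internal algorithm; the focal length in pixels, the baseline, and the principal point are hypothetical values.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Range to a point (m) from rectified stereo: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


def point_from_pixel(u, v, disparity_px, focal_length_px, baseline_m, cx, cy):
    """Back-project pixel (u, v) with a known disparity to camera-frame X, Y, Z (m)."""
    z = depth_from_disparity(disparity_px, focal_length_px, baseline_m)
    x = (u - cx) * z / focal_length_px
    y = (v - cy) * z / focal_length_px
    return (x, y, z)


if __name__ == "__main__":
    # Hypothetical calibration: 2000 px focal length, 0.10 m baseline,
    # principal point at the center of a 1920 x 1200 image.
    print(point_from_pixel(1060, 600, 100.0, 2000.0, 0.10, 960.0, 600.0))
```

Because range is inversely proportional to disparity, range resolution degrades with distance from the cameras, which is one reason low-altitude operation benefits point cloud quality.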

Table 1.

A comparison of system features used within the Autonomous Benthic Imaging and Surveying System 1 (ABISS1) and ABISS2 dive cameras and autonomous underwater vehicle implementations. [ABISS, Autonomous Benthic Imaging and Surveying System; AUV, autonomous underwater vehicle; in., inch; N=, number of units installed on system; SSD, solid-state drive; OS, operating system; GB, gigabyte; TB, terabyte; mm, millimeter; px, pixel; fps, frames per second; ROS, Robot Operating System; +, plus]

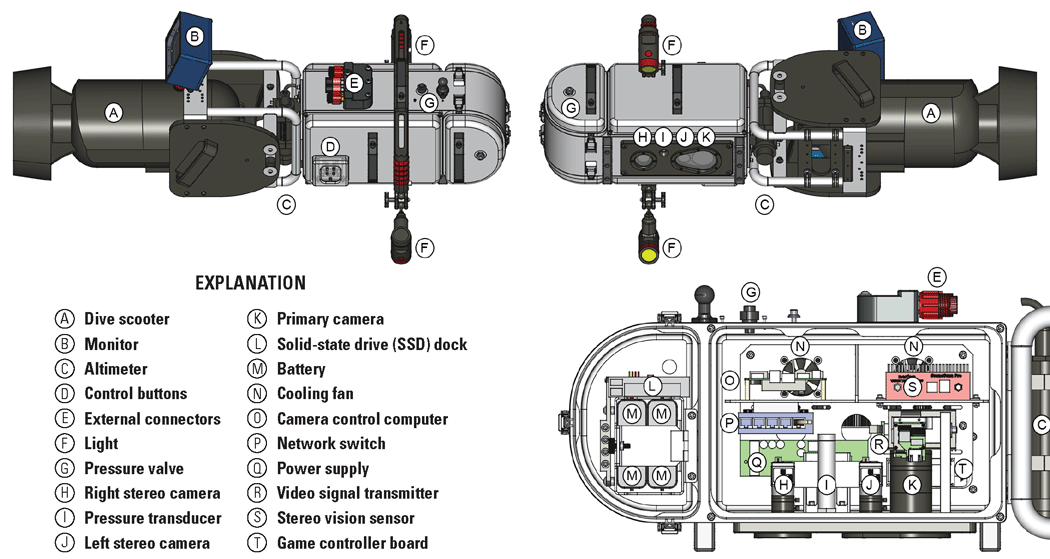

The dive camera contained additional hardware specific to its operations including an altimeter (Teledyne Benthos PSA-916) and a pressure transducer for depth estimation (Omega PX409-100GUSBH). Four custom made lithium-ion batteries (Sexton Corporation) were included to power the system via an M2-ATX power supply. Two removable sets of four batteries were created, one with a capacity of 6.6 amp-hours per battery and the other with a capacity of 7.8 amp-hours per battery. An SSD dock (ICY DOCK FlexiDOCK MB521SP-B) allowed for quick replacement of the 256-gigabyte, 2.5-inch SSDs used for data storage in the field. External buttons were installed on the starboard side of the camera housing, which could be operated underwater by gloved divers to power the system, start and stop data collection, adjust the camera gain, and reset the system in case of error. Button functionality was encoded using a generic Universal Serial Bus (USB) joystick game control board connected to the camera control computer. A custom waterproof monitor housing (Sexton Corporation) was created, allowing divers to use its 800 × 480-pixel, 5-inch screen (Adafruit High-Definition Multimedia Interface [HDMI] 5-inch Display Backpack-Without Touch) to view a live feed of their altitude and images gathered by the primary camera to check image quality in real time.

Integrations

Dive Camera

ABISS1 was first designed and built by the Sexton Corporation with input from the research teams at the USGS and Michigan Tech Research Institute to be operated as a stand-alone device for use by a single SCUBA diver (fig. 1). The dive camera housing was machined from solid blocks of aluminum and contained two sealed chambers with cable passthroughs (fig. 2). The forward chamber contained the batteries and SSD dock and was accessible from the port side by unlatching and removing an O-ring sealed panel. The aft chamber contained assorted electronics, cameras, and cooling fans, and was accessible on the port side by removing four bolts. Two flat camera ports of sapphire glass were installed on the imaging side (bottom) of the housing.

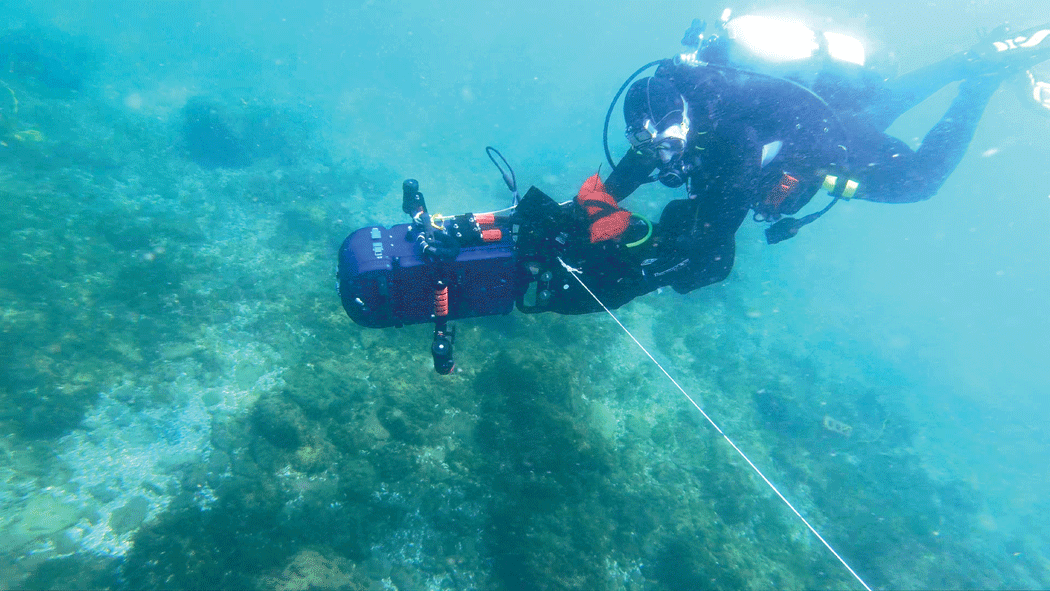

Figure 1.

Photograph showing a U.S. Geological Survey self-contained underwater breathing apparatus (SCUBA) diver collecting data using the dive camera attached to a dive scooter. The dive camera was tethered to a 120-pound center pivot pole to control its flight path. Photograph by Peter Esselman, U.S. Geological Survey.

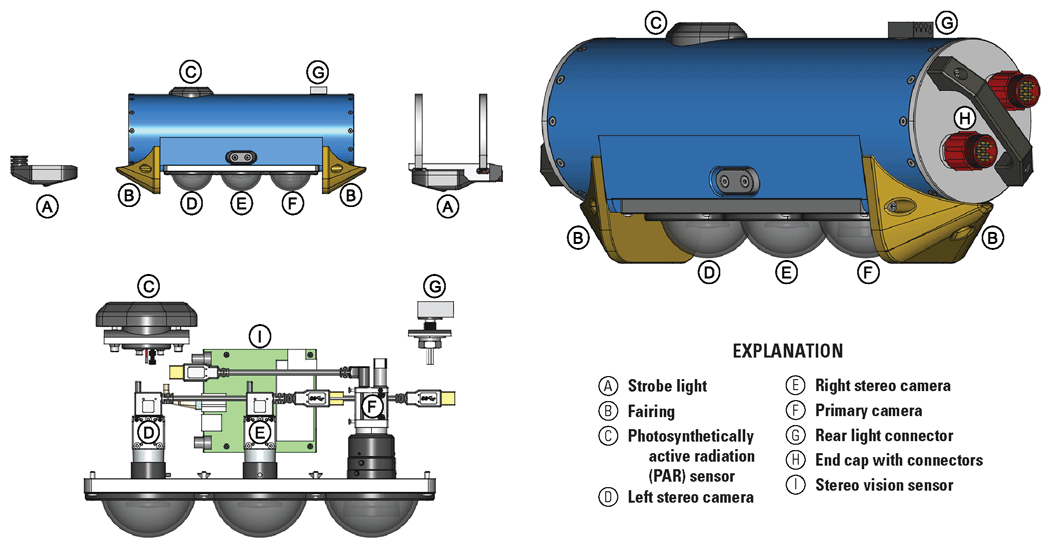

Figure 2.

Technical drawings showing the Autonomous Benthic Imaging and Surveying System version 1 (ABISS1) dive camera with components labeled. The top left drawing shows the starboard side from above, the top right drawing shows the port side from below, and the bottom right drawing shows the internal components from the port side. Drawing by Alden Tilley, U.S. Geological Survey.

A waterproof monitor was connected externally using a custom cable with SubConn DIL13F connectors, connected to SubConn DBH13M connectors on the main camera and monitor housings, which provided power to the display and signal via an Ethernet connection. An HDMI extender (J-Tech Digital JTD-HDEX-1) was used to transmit and receive the HDMI signal via the Ethernet connection. An altimeter (Teledyne Benthos PSA-916) was mounted on the rear of the device and was connected externally via a serial connection through a custom cable with a SubConn DIL6F connector joined to a SubConn DBH6M connector on the outside of the housing body, which then internally led to a USB to serial adapter. An external Ethernet connection, which was capped while underwater, allowed for communication with the camera control computer using a SubConn DBH8M connector without opening the sealed enclosure. SeaLife Sea Dragon 2500F lights were attached to the sides of the dive camera and were controlled independently from the dive camera and powered from their internal rechargeable batteries. The dive camera was designed to attach to a dive scooter (Apollo AV-2 Evolution) to cover larger areas of lakebed more quickly (figs. 1 and 2; refer to “Data Collection Operations” section for how this was deployed to collect field data).

Autonomous Underwater Vehicle

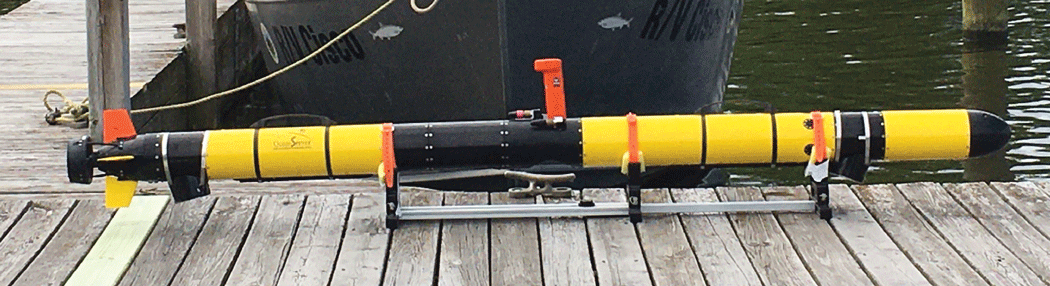

In 2019, the ABISS1 system was integrated with an L3Harris Iver3 EP16 AUV that is still in use at the time of writing (2026) with the ABISS2 system. The Iver3 AUV is a short-range AUV that was selected for the following characteristics: price point, reliability, ease of use, flexibility to accommodate science payloads, availability of a sophisticated inertial navigation system (INS), and a forward-looking object avoidance sounder to avoid objects near the lake and (or) seabed. The USGS Iver3 AUV is equipped with an Exail (formerly iXBlue) Phins Compact C3 INS, which provides real-time estimates of latitude and longitude upon submergence with a horizontal error less than 0.3 percent of distance traveled (that is, less than 3 m error per 1 kilometer traveled). The Phins Compact C3 INS allows the AUV to operate without the need for repeated surfacing to acquire Global Positioning System (GPS) positions along the route, which greatly reduces the need for vigilance by a field crew. The mast of the vehicle contains an Iridium satellite antenna, and a Wide Area Augmentation System-enabled GPS antenna that is used as the reference location for the latitude and longitude metadata. Altitude and speed along bottom are recorded using a Doppler Velocity Log (Teledyne Pathfinder). To avoid objects in the water, the vehicle has a forward-looking object avoidance sounder (Imagenex 852) in the vehicle’s nose cone. The nose cone also contains a pressure transducer (Keller 20SX) to record depth and a digital compass (OceanServer OS5000). The AUV is controlled by the L3Harris proprietary control software (Underwater Vehicle Console), which runs on an onboard computer (referred to as the front seat computer) that controls the vehicle and logs its sensor data. When the AUV is at the surface, the front seat computer can be accessed from a laptop computer via a Wi-Fi connection to load, start, and stop missions.
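The stated INS error specification translates directly into a position error bound for a planned mission. The short sketch below applies the 0.3 percent figure from the text; treating the specification as a simple linear bound on distance traveled since the last surface position fix is an assumption made for illustration.

```python
INS_DRIFT_FRACTION = 0.003  # 0.3 percent of distance traveled, as stated in the text


def drift_bound_m(distance_traveled_m, drift_fraction=INS_DRIFT_FRACTION):
    """Upper bound on horizontal position error (m) after a submerged transit."""
    if distance_traveled_m < 0:
        raise ValueError("distance must be non-negative")
    return drift_fraction * distance_traveled_m


def max_distance_for_error_m(max_error_m, drift_fraction=INS_DRIFT_FRACTION):
    """Longest submerged transit (m) that keeps the error bound under max_error_m."""
    if max_error_m < 0 or drift_fraction <= 0:
        raise ValueError("max_error_m must be non-negative and drift_fraction positive")
    return max_error_m / drift_fraction


if __name__ == "__main__":
    for km in (1, 5, 10):
        print(f"{km} km submerged: error bound {drift_bound_m(km * 1000.0):.1f} m")
```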

Custom hull sections were machined by Russard Precision Machine (Rockland, Massachusetts, U.S.A.) to carry ABISS1 system components. The hull sections were machined from aluminum to the exact specifications of the AUV and are connected via double O-ring sealed joiner rings with 12 screws around the circumference of each joint. Two custom 6-inch-long sections containing the lights were installed in the front and back of the vehicle, and the custom camera sections were installed directly behind the mast. The primary camera and stereo camera were held in separate sections with the primary camera’s 5.5-inch-long section being aft of the stereo camera’s 9-inch-long section (fig. 3). The flat viewports for the lights and cameras were made of sapphire glass. The light sections were equipped with magnetic switches that could be triggered by swiping a magnet on the outside of the vehicle hull to turn internal components on and off. Both light sections had individual magnetic switches on their port side, and the camera control computer was turned on and off by a magnetic switch on the starboard side of the front light section. Improvised mirrored deflectors were attached using hose clamps to better focus the light into the cameras’ fields of view.

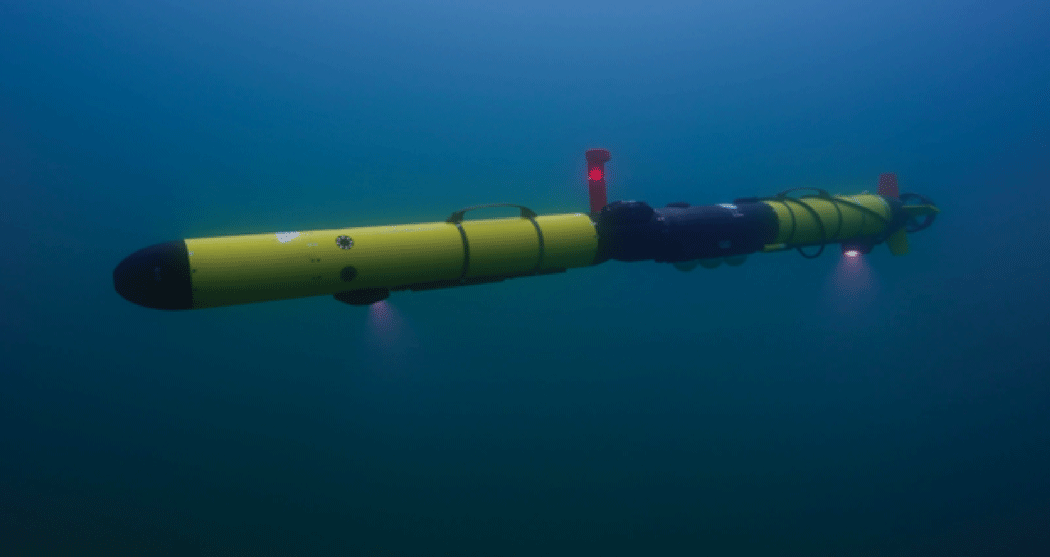

Figure 3.

Photograph showing an Iver3 autonomous underwater vehicle with the Autonomous Benthic Imaging and Surveying System version 1 (ABISS1) installed. Custom sections are shown in black except for the nose, the tail, and the mast sections. Light reflectors are visible on the bottom of the two light sections, and the camera sections are positioned behind the mast (that is, to the left in this image). Photograph by Peter Esselman, U.S. Geological Survey.

The ABISS camera control computer (that is, the back seat computer), an Ethernet switch, and the stereo vision sensor were installed in the AUV’s electronics bay, the long section in front of the AUV mast. The Ethernet switch enabled a Telnet connection between the front and back seat computers, which allowed all variables recorded by the front seat computer to be included in the ABISS recordings. The data transferred via this connection included positional data (including latitude, longitude, depth, altitude, roll, pitch, and heading), which were used to georeference the images. Data were offloaded via an Ethernet connection near the mast using a Teledyne Impulse 12-pin connector that allows for download speeds close to 1 gigabit per second.
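Because the cameras record several frames per second and the vehicle data are requested from the front seat computer about once per second, georeferencing an image requires estimating the vehicle state at the image timestamp. One plausible approach is linear interpolation between the bracketing navigation records, sketched below. This report does not state which method was used, so the approach, the record layout, and the sample values are assumptions; heading is omitted because angles need wraparound handling.

```python
from bisect import bisect_right


def interpolate_fix(nav_records, image_time_s):
    """Linearly interpolate (lat, lon, depth) to an image timestamp.

    nav_records: list of (time_s, lat_deg, lon_deg, depth_m), sorted by time.
    """
    if not nav_records:
        raise ValueError("no navigation records")
    times = [record[0] for record in nav_records]
    if image_time_s < times[0] or image_time_s > times[-1]:
        raise ValueError("image timestamp outside the navigation record")
    i = bisect_right(times, image_time_s)
    if i == len(nav_records):
        # Timestamp equals the final record time; no later record to blend.
        return tuple(nav_records[-1][1:])
    t0, *before = nav_records[i - 1]
    t1, *after = nav_records[i]
    weight = (image_time_s - t0) / (t1 - t0)
    return tuple(a + weight * (b - a) for a, b in zip(before, after))


if __name__ == "__main__":
    # Hypothetical 1 Hz navigation records.
    nav = [
        (0.0, 45.00000, -86.00000, 20.0),
        (1.0, 45.00001, -86.00002, 20.4),
    ]
    print(interpolate_fix(nav, 0.5))
```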

Software

ABISS1 systems used the Ubuntu 16.04.7 OS (Ubuntu, 2020) with ROS Kinetic Kame (Open Robotics, 2016). The data from all sensors were recorded using ROS and saved in the .bag file format. ROS was configured so that each sensor had a dedicated package, in which the nodes published messages to their corresponding topic. The primary camera used the avt_vimba_camera package (Massot, 2018), and the stereo camera used the nerian_stereo package developed by Nerian Vision Technologies (Schauwecker, 2018). The other ROS packages were custom written for this system. These included the bagger package, which started and stopped recording; the bringup package, which facilitated starting all other packages; and the telnet_ros package (AUV integration only), which used a Telnet connection to the front seat of the AUV to request data once per second and publish the responses. Each .bag file was set to store a maximum of 2,700 megabytes of data; once this file size was reached, a new .bag file was automatically started.
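The size-based file rollover described above can be illustrated with a small simulation. This is a sketch of the general behavior, not the bagger package itself: it assumes that a file is closed as soon as its accumulated size reaches the limit, that a megabyte is 10^6 bytes, and that each recorded message has a hypothetical fixed size.

```python
def split_into_bags(message_sizes_bytes, max_bag_bytes):
    """Simulate size-based rollover and return the bytes written to each file."""
    if max_bag_bytes <= 0:
        raise ValueError("max_bag_bytes must be positive")
    bag_totals = []
    current_bytes = 0
    for size in message_sizes_bytes:
        current_bytes += size
        if current_bytes >= max_bag_bytes:
            # Limit reached: close this file and start a new one.
            bag_totals.append(current_bytes)
            current_bytes = 0
    if current_bytes > 0:
        bag_totals.append(current_bytes)  # final, partially filled file
    return bag_totals


if __name__ == "__main__":
    MEGABYTE = 10**6          # assumed definition of a megabyte
    limit = 2700 * MEGABYTE   # file size limit from the text
    frames = [9 * MEGABYTE] * 1000  # hypothetical 9 MB messages
    totals = split_into_bags(frames, limit)
    print(len(totals), "files;", [t // MEGABYTE for t in totals], "MB each")
```

Because the check happens after a message is written in this sketch, a file can exceed the limit by up to one message; an implementation that checks before writing would instead keep every file at or under the limit.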

The dive camera integration of ABISS1 contained additional packages for its unique altimeter and pressure transducer. A custom altimeter package was written to read the altimeter output via a serial connection and publish the altitude 5 times per second. The ‘transducer’ package read the pressure transducer via a serial connection and published the depth each second. The fboverlay and ncdisplay packages controlled the dive camera’s display, providing the operator with a live view of the images, battery status, SSD storage status, and brightness. The joy package (Bohren and others, 2017) handled the output of the buttons on the camera, and the uibuttons package managed the functions of those buttons, including starting and stopping recording, changing exposure times, and changing the display view. The autocamera package was used to adjust the camera’s gain setting by enabling autogain for a short period of time to adapt to changes in ambient lighting. All the packages specific to the dive camera were custom written except for the joy package.
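Depth estimation from a gauge pressure reading follows from the hydrostatic relation, depth = pressure / (density × gravity). The sketch below is a generic illustration and not the transducer package; the freshwater density of 1,000 kilograms per cubic meter is an assumption, and interpreting the transducer as reporting gauge pressure in pounds per square inch is also an assumption based on its model number.

```python
PSI_TO_PASCAL = 6894.757293168   # exact by definition of the pound-force and inch
STANDARD_GRAVITY = 9.80665       # m/s^2
FRESHWATER_DENSITY = 1000.0      # kg/m^3, assumed; varies with temperature


def depth_from_gauge_psi(gauge_psi, density_kg_m3=FRESHWATER_DENSITY):
    """Convert gauge pressure (psi) to water depth (m) using depth = P / (rho * g)."""
    if gauge_psi < 0:
        raise ValueError("gauge pressure must be non-negative when submerged")
    if density_kg_m3 <= 0:
        raise ValueError("density must be positive")
    return gauge_psi * PSI_TO_PASCAL / (density_kg_m3 * STANDARD_GRAVITY)


if __name__ == "__main__":
    for psi in (10.0, 50.0, 100.0):
        print(f"{psi:5.1f} psi gauge -> {depth_from_gauge_psi(psi):5.1f} m")
```

Under these assumptions, a reading of 100 pounds per square inch corresponds to about 70 m of fresh water.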

Controls and Operations

Dive Camera

The dive camera implementation of ABISS1 was controlled using the external buttons. The lights were manually turned on and off using their separate power buttons. Users could also connect to the camera control computer from a field laptop via the Secure Shell protocol to make changes to primary camera parameters, such as exposure, by changing parameters in the ROS launch file. Adjustments to the stereo camera, including calibration, were made by connecting to the stereo vision sensor’s configuration webpage.

The ABISS1 dive camera integration was designed to save the data to a 256-gigabyte SSD placed in an SSD dock next to the batteries in the front compartment for easy removal (fig. 2, “Explanation” item L). After data collection was completed, the SSD had to be physically removed from the system to copy its data to a larger storage device using a USB to Serial AT Attachment (SATA) adapter. Once the copy was completed, the .bag files were manually sorted into folders by data collection event. When operating the dive camera, users installed the batteries and an SSD into the forward compartment before diving. Then, if the SSD needed to be replaced between dives, the full SSD would be swapped for an empty SSD. Battery capacity was sufficient for a typical day of operation, so battery replacement was not required. The batteries were removed from the system for charging and for storage in a lithium-ion battery safe bag.

Autonomous Underwater Vehicle

The AUV integration of ABISS1 was operated via command line inputs delivered to the camera control computer over Wi-Fi from a field laptop via the Secure Shell protocol. Commands were used to launch or terminate the nodes as needed. If lights were needed, they were turned on manually using the magnet for the AUV. Adjustments to primary camera parameters, such as exposure and gain, were done through modifications to parameters in the ROS launch file. Adjustments to the stereo camera, including calibration, were made by connecting to the stereo vision sensor’s configuration webpage.

The .bag files saved on the AUV backseat computer were copied to portable hard drives using the rsync command on a laptop running the Linux operating system. After download was complete, .bag files were manually sorted into their respective collect folders. The AUV mission log folder(s) from the front seat computer were manually matched and copied to their respective collect folders later.

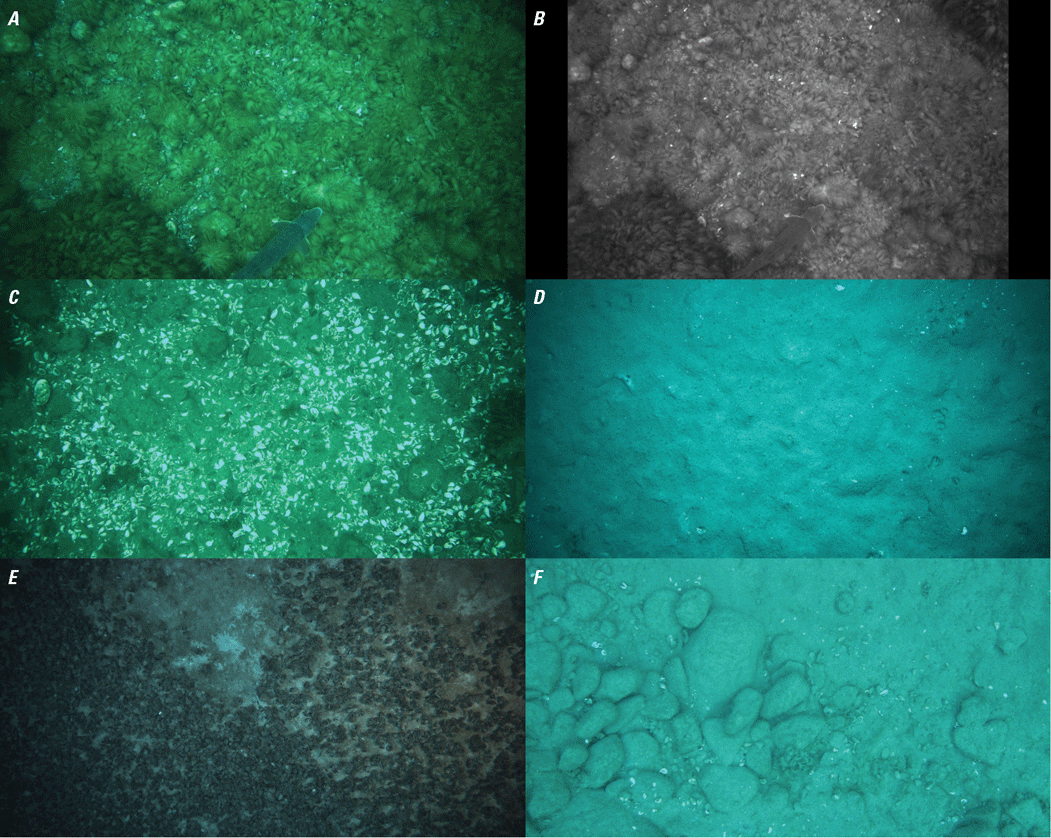

Issues with Autonomous Benthic Imaging and Surveying System 1

Although the ABISS1 system was successfully used to collect tens of thousands of interpretable images of Great Lakes benthic environments (fig. 4), it had shortcomings. The primary issues associated with ABISS1 related to image quality. The primary camera did not allow for auto exposure, so a constant exposure time had to be used across a wide range of ambient lighting conditions encountered during a typical mission. Additionally, the onboard lights were not sufficiently bright to acquire images in dark conditions, such as at night or in deep water, even with the reflectors. This resulted in a high prevalence of overexposed images in shallow areas during daytime and underexposed images in deep areas and at night. Poor image quality led to a need for extensive quality checking of images, and a high rate of rejection because of poor quality, even after extensive effort to determine optimal camera settings.
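A simple automated screen for overexposed and underexposed images can be based on the fraction of pixels near the ends of the intensity range. The sketch below illustrates that idea and is not the quality-checking procedure used by the authors, which is not described here; the 8-bit intensity levels and the fraction threshold are hypothetical and would need tuning against reviewed images.

```python
def classify_exposure(pixels, dark_level=10, bright_level=245, max_fraction=0.5):
    """Label an image from its 8-bit pixel values (flat sequence of ints, 0-255).

    Returns "overexposed", "underexposed", or "ok". max_fraction should be
    0.5 or greater so both conditions cannot hold at once.
    """
    count = len(pixels)
    if count == 0:
        raise ValueError("image has no pixels")
    dark_fraction = sum(1 for p in pixels if p <= dark_level) / count
    bright_fraction = sum(1 for p in pixels if p >= bright_level) / count
    if bright_fraction > max_fraction:
        return "overexposed"
    if dark_fraction > max_fraction:
        return "underexposed"
    return "ok"


if __name__ == "__main__":
    # Synthetic examples standing in for flattened grayscale images.
    print(classify_exposure([255] * 80 + [128] * 20))
    print(classify_exposure([0] * 80 + [128] * 20))
    print(classify_exposure([128] * 100))
```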

Figure 4.

Figure showing raw images collected using the Autonomous Benthic Imaging and Surveying System version 1 (ABISS1). The top images are a matching (A) primary camera image and (B) stereo camera image. The bottom four images are assorted primary images showing (C) mixed gravel lakebed with dead Dreissena spp. (mussel) shells, (D) fine sediment, (E) live mussels covering fine sediment, and (F) mixed gravel substrate.

The software used with ABISS1 had flaws that inconvenienced data collection. For instance, the cameras on ABISS1 ran for the entire duration of a collect, resulting in the recording of many boat deck and water column images as the AUV was diving to the lakebed. This contributed further to high rates of unusable images and consumed valuable onboard data storage capacity. Finally, the primary camera and stereo camera were configured such that the primary camera would receive trigger signals from the stereo vision sensor, but the configuration did not function as intended, resulting in the cameras being unintentionally out of sync.

Autonomous Benthic Imaging and Surveying System 2 (ABISS2)

After ABISS1 was used intensively in 2020 and 2021, the system was reengineered to (1) reduce the prevalence of unusable images from overexposure, underexposure, and high altitude; (2) improve the user interface; (3) increase camera resolution; (4) convert the stereo camera from monochrome to color; (5) add a photosynthetically active radiation (PAR) sensor to record photon flux; and (6) marginally reduce the physical length of the system. The PAR sensor was added to support estimation of growth-limiting factors for benthic plants and algae and is not related to the function of the camera and (or) strobes. The resulting ABISS2 system was integrated into two Iver3 AUVs and into the same dive housing previously described for ABISS1.

Hardware

ABISS2 (fig. 5) was upgraded to carry a 12.3-megapixel color primary camera (Basler ace acA4112-20uc) oriented with the bottom of the camera sensor facing the front of the vehicle (that is, the direction of travel). This camera orientation, rotated 90 degrees from the ABISS1 system, covers a wider swath width, but still allows sufficient image overlap to support photogrammetric reconstructions of the lakebed. ABISS2 also carries a pair of 3.1-megapixel color cameras for stereo imaging (Basler ace acA2040-55uc with 4 mm lenses) oriented with their left sides to the front of the vehicle. The primary camera triggers the stereo cameras and the strobe lights. Similar to ABISS1, the stereo vision sensor produces real-time stereo disparity maps and point clouds. The lens on the primary camera can be swapped by opening the ABISS2 housing, with a 16 mm lens (Edmund Optics 16 mm HP Series Lens, 1 inch) being used most often. All cameras are set to record at 2 fps, which is the maximum speed at which the strobe lights can achieve their full brightness, though this frame rate can be increased with some loss to strobe brightness.
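The tradeoff among frame rate, vehicle speed, and image overlap can be expressed with a short calculation: consecutive images are separated along track by speed divided by frame rate, and the overlap is the fraction of the along-track footprint that the next image shares. In the sketch below, the vehicle speed, footprint length, and target overlap are hypothetical values and are not taken from this report.

```python
def forward_overlap(speed_m_s, frame_rate_fps, footprint_along_track_m):
    """Fraction of each image footprint shared with the next image (0 to 1)."""
    if speed_m_s < 0:
        raise ValueError("speed must be non-negative")
    if frame_rate_fps <= 0 or footprint_along_track_m <= 0:
        raise ValueError("frame rate and footprint must be positive")
    spacing_m = speed_m_s / frame_rate_fps  # distance traveled between frames
    return max(0.0, 1.0 - spacing_m / footprint_along_track_m)


def min_frame_rate(speed_m_s, footprint_along_track_m, target_overlap):
    """Lowest frame rate (fps) that achieves the target forward overlap."""
    if speed_m_s < 0 or footprint_along_track_m <= 0:
        raise ValueError("speed must be non-negative and footprint positive")
    if not 0.0 <= target_overlap < 1.0:
        raise ValueError("target_overlap must be in [0, 1)")
    return speed_m_s / (footprint_along_track_m * (1.0 - target_overlap))


if __name__ == "__main__":
    # Hypothetical mission: 1 m/s over ground, 1 m along-track footprint.
    print(f"Overlap at 2 fps: {forward_overlap(1.0, 2.0, 1.0):.0%}")
    print(f"Frame rate for 60% overlap: {min_frame_rate(1.0, 1.0, 0.6):.2f} fps")
```

The calculation also shows why sensor orientation matters: orienting the shorter sensor dimension along track shortens the footprint in the direction of travel, so a given frame rate yields less overlap while the swath across track widens.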

Figure 5.

Technical drawings showing the Autonomous Benthic Imaging and Surveying System version 2 (ABISS2) with components labeled. The top left drawing shows the port side of the camera section including lights. The top right drawing shows the port side of the camera section from the aft, and the bottom left drawing shows the internal components from the port side. The end caps shown in the top right drawing are only installed when the camera is being run independently from an autonomous underwater vehicle and are removed when connected to an autonomous underwater vehicle. Drawings by Alden Tilley, U.S. Geological Survey.

The AUV integration of ABISS2 (fig. 6) carries an up-facing LI-COR LI-192 Underwater Quantum Sensor, which measures PAR. The PAR sensor is connected to a LI-COR 2291 millivolt adapter from which the output is read using a Yocto-milliVolt-Rx USB millivolt sensor. Lighting for the AUV integration is provided by two Arctic Rays Remora strobe lights, providing as much as 30,000 lumens each (total of 60,000 lumens) in synchrony with the three cameras. The AUV integration of ABISS2 uses the same Telnet connection, camera control computer (Intel NUC7i7DNKE), and 4-terabyte SSD as ABISS1 (table 1).

Figure 6.

Photograph showing an Iver3 autonomous underwater vehicle with Autonomous Benthic Imaging and Surveying System version 2 (ABISS2) installed collecting data underwater. Photograph by Inspired Planet Productions, used with permission.

The AUV hull section for ABISS2 can be operated when not installed on the AUV for bench-top testing, in-water calibrations, and lens focusing. The ABISS2 camera system has O-ring sealed end caps with waterproof connectors (SubConn DBH13M) for this purpose (fig. 5, “Explanation” item H). Thus far, this has been done with an Intel NUC13ANKi5, although other computers could be used with appropriate configuration.

The hardware on the dive camera integration of ABISS2 differs slightly from the ABISS2 AUV integration. Starting from the base configuration of the ABISS1 dive camera presented in figure 2, the ABISS2 dive camera was upgraded to carry the same higher resolution primary camera used in the ABISS2 AUV integration (Basler ace acA4112-20uc), an upgraded camera control computer (Intel NUC13ANKi5), and an inertial measurement unit (IMU; Adafruit BNO055 STEMMA QT connected via an Adafruit QT Py) that records the housing’s roll and pitch. All other components from the dive camera (that is, altimeter, pressure transducer, stereo vision sensor, stereo cameras, and so on) are identical to ABISS1 (table 1). A PAR sensor has not yet been added to the dive camera.

Integrations

Dive Camera

In 2025, the ABISS1 dive camera was upgraded to use the ABISS2 primary camera, its software was updated to ROS Noetic Ninjemys, and an IMU was added. The camera control computer was also upgraded. In addition, 3D-printed mounts were used to adapt the positions of the new primary camera, the IMU, and the camera control computer in the dive camera housing. New trigger cables were also added to allow the primary camera to trigger the stereo camera.

Autonomous Underwater Vehicle

ABISS2’s cameras, stereo vision sensor, and PAR sensor were built into a custom 18-inch hull section designed for installation on the Iver3 AUV (fig. 5). The custom hull section was machined from a solid block of aluminum and anodized. The camera control computer and an Ethernet switch were installed in the AUV electronics bay forward of the mast, and the camera control computer is configured to turn on once it receives power from the AUV on startup. The front strobe light is attached via a 22-mm penetrator port into the electronics bay and is powered and controlled from wires routed internally through the hull. The rear strobe light is powered and controlled by a cable that runs externally to the AUV from a SubConn LPBH-4F connector on top of the camera section (fig. 5, “Explanation” item G), and is secured to the AUV hull with a hose clamp. The cameras have 3-inch acrylic dome viewports that are evenly spaced, and the domes are protected on their forward and aft sides by 3D printed fairings (fig. 5, “Explanation” item B) in case the AUV comes into contact with the lakebed; however, in practice, the fairings were removed because of weight constraints.

Software

ABISS2 uses the Ubuntu 20.04.6 OS (Ubuntu, 2023) with ROS Noetic Ninjemys (Open Robotics, 2020). The data from all the sensors are recorded using ROS and are saved in the .bag file format. ROS is configured so that each sensor has a dedicated package, in which the nodes publish messages to their corresponding topic. The primary camera uses the pylon-ros-camera package developed by Basler (Debout and others, 2022), and the stereo camera uses the nerian_stereo package developed by Nerian Vision Technologies (Schauwecker and Torky, 2023). Using the pylon-ros-camera package to control the cameras enables compatibility with a variety of other Basler cameras, such as the monochrome variant of ABISS2’s primary camera (Basler ace acA4112-20um). Like ABISS1, the other ROS packages were developed specifically for this system. The bagger package starts and stops recordings. The bringup package is used to configure the primary camera when it powers on and to start and stop the camera in the middle of a recording. The gui_server package manages a webpage-based user interface by running the server for the webpage and handling any interactions between the user and ROS, such as launching the nodes used for recording and viewing a live feed of the data. A cpu_temp package was added to record the internal temperature of the central processing unit (CPU) as a diagnostic tool to monitor for overheating, which has happened on hot summer days. The AUV integration has the telnet_ros package, which uses a Telnet connection to the front seat computer of the AUV to request data four times per second and publishes the responses. The AUV integration also uses the par_light_sensor package, which uses yoctolib (Yoctopuce Sarl, 2022) to read the Yocto-milliVolt-Rx USB millivolt sensor connected to the PAR sensor, and publishes the value at 1-second intervals.

The dive camera implementation of ABISS2 uses the same altimeter and transducer packages as ABISS1. The standard joy package developed by Bohren and others (2021) was updated for ROS Noetic. The imu package reads and publishes the IMU sensor data. The gui_display package displays the current roll, pitch, and altitude, along with a live feed from the cameras. The uibuttons node was slightly modified from ABISS1 to change the functions of some buttons (refer to the “Controls and Operations” section) and to add keyboard equivalents for development purposes. The bagger, bringup, and gui_server packages were adjusted to match the capabilities of the dive camera, for example by removing features related to the telnet_ros package.

Controls and Operations

ABISS2 abandoned the command line interface of ABISS1 in favor of a more user-friendly webpage graphical user interface. The webpage interface allows users to adjust camera parameters and to view data coming from the front seat computer through the Telnet connection, the currently running ROS topics and nodes, a list of recorded files (with the option to delete previously recorded data), and a variety of troubleshooting utilities. ABISS2’s control software allows users to control when the cameras trigger during a recording: (1) only within a bounded range of altitudes above the lakebed, (2) only below a maximum altitude, or (3) always (that is, the ABISS1 default). The altitude-based options restrict image collection to altitudes that are within view of the lakebed, which reduces battery drain from continuously flashing the strobe lights and avoids storing data that are not useful for analysis. The primary camera used in ABISS2 allows for autoexposure, which changes exposure times based on the brightness of the scene to avoid overexposure or underexposure. The upper limit of exposure (that is, maximum exposure time) can be set to minimize motion blur while the AUV is moving. This has reduced the overall rate of rejection of images collected by the system (fig. 7). ABISS2’s primary camera also allows for autogain, with an upper limit set to avoid excessive noise in the images. To further minimize noise, the autogain function is set to increase gain above its lower limit only when the upper exposure limit is reached. As a result, unless the ambient lighting is very bright, images are normally taken at the upper exposure limit, and only gain is adjusted to maintain brightness. Auto white balance was initially an option, but this was later removed in favor of color calibration.
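The three triggering options described above can be summarized as a small decision function. The following is a minimal sketch in Python, not the ABISS2 control software itself. The names `TriggerMode` and `should_trigger`, the handling of a missing altimeter reading, and the altitude limits in the usage note are assumptions made for illustration.

```python
from enum import Enum
from typing import Optional


class TriggerMode(Enum):
    """Hypothetical names for the three triggering options in the text."""
    ALTITUDE_BAND = "band"      # (1) only within [min_alt_m, max_alt_m]
    BELOW_MAX = "below_max"     # (2) only at or below max_alt_m
    ALWAYS = "always"           # (3) regardless of altitude (ABISS1 default)


def should_trigger(mode: TriggerMode,
                   altitude_m: Optional[float],
                   min_alt_m: float = 0.0,
                   max_alt_m: float = float("inf")) -> bool:
    """Return True when the cameras should fire at the current altitude."""
    if mode is TriggerMode.ALWAYS:
        return True
    if altitude_m is None:
        # Assumption: without a valid altitude, treat the lakebed as out of view.
        return False
    if mode is TriggerMode.BELOW_MAX:
        return altitude_m <= max_alt_m
    # Remaining case is ALTITUDE_BAND.
    return min_alt_m <= altitude_m <= max_alt_m
```

For example, with a hypothetical band of 1.0 to 3.0 m, `should_trigger(TriggerMode.ALTITUDE_BAND, 1.75, 1.0, 3.0)` is true, whereas an altitude of 4.0 m would suppress the cameras and strobes during descent or transit.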

Figure showing images taken using the Autonomous Benthic Imaging and Surveying System version 2 (ABISS2). The top images are a matching (A) primary camera image and (B) stereo camera image. The bottom four images are assorted primary images showing (C) Dreissena spp. (mussel) encrusted gravel, (D) mussel-covered fine sediment, (E) fine sediment with algae tufts, and (F) mixed gravel lakebed with dead mussel shells and attached algae over rocks.

The .bag files saved on the camera control computer are offloaded to external hard drives with a laptop in the field using a graphical user interface-based software utility written by the USGS specifically for this process. The utility allows users to copy the .bag files and AUV mission log folder(s) to a user-selected destination folder, with subfolder names being assigned automatically.

Issues with Autonomous Benthic Imaging and Surveying System 2

The replacement of flat sapphire camera ports in ABISS1 with acrylic domes in ABISS2 resulted in images that are mildly out of focus along the edges and in their centers. This modification was made to reduce distortion but came at the expense of a reduced depth of field. Reduced focus in the centers of images may be due to vertical misalignment of the lenses within the domes. Additionally, the acrylic domes scratch easily and are expensive to replace. Conversion to sapphire flat ports is under consideration.

Data Collection Specifications

Dive Camera

The dive camera can be deployed in several ways to generate point and (or) transect data. One mode of operation has been to tether the dive camera to a 2-m high vertical post attached to a 120-pound cement base, and to run a spiral pattern with one SCUBA diver operating the camera attached to a diver scooter, and a second SCUBA diver drawing in the line after each 360-degree path is complete (fig. 1). This procedure results in a circular area of overlapping coverage that can be georeferenced to the pivot location from the boat’s GPS. A second mode of operation is to run linear transects with a known starting location. This mode of deployment makes it more difficult to georeference image locations as the dive camera moves away from its initial position, although simultaneous localization and mapping methods may be a viable approach to improve positional estimates in the future.

During operation of the dive camera, divers are responsible for managing the altitude, roll, and pitch of the camera to maintain a nadir camera orientation. When operating ABISS1, the divers could view their altitude, but there was no sensor for roll or pitch. ABISS2 has an onboard IMU for sensing roll and pitch, which are shown on the display to allow the divers to adjust the dive camera’s orientation with the objective of collecting nadir images. The dive scooter is used to control forward movement.

Autonomous Underwater Vehicle

Operations of the AUV for data collection with both versions of ABISS are guided by a specific set of mission parameters to optimize image quality and horizontal resolution to support detection of small features (that is, benthic fish and mussels) on the lake and (or) seabed. These parameters were determined through trial and error during many field deployments, guided by the desired ground resolution of the images. In standard operations with ABISS, the AUV is programmed to move at a forward velocity of 1.5 knots (0.77 meter per second), which allows for sufficient exposure times with minimal motion blur. Higher speeds could hypothetically still yield unblurred images if the maximum exposure time were reduced, which would require a high amount of ambient light. At speeds lower than 1.5 knots, the AUV maintains neither its desired altitude nor a horizontal orientation parallel to the lakebed, because the AUV is positively buoyant and requires forward motion to maintain its depth and orientation.
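The trade-off between vehicle speed and exposure time can be illustrated with a short calculation. The sketch below assumes that motion blur is simply the distance the vehicle travels during the exposure, expressed in pixels. It uses the 1.5-knot speed and the 0.43-mm ABISS2 ground resolution reported in this section. The function names and the one-pixel blur budget are assumptions for illustration, not settings taken from ABISS.

```python
KNOTS_TO_M_PER_S = 1852.0 / 3600.0  # one knot is 1,852 meters per hour


def motion_blur_px(speed_knots: float, exposure_s: float,
                   ground_res_mm: float) -> float:
    """Along-track blur, in pixels, for one exposure over a flat lakebed."""
    speed_mm_per_s = speed_knots * KNOTS_TO_M_PER_S * 1000.0
    return speed_mm_per_s * exposure_s / ground_res_mm


def max_exposure_s(speed_knots: float, ground_res_mm: float,
                   max_blur_px: float = 1.0) -> float:
    """Longest exposure that keeps blur within max_blur_px (assumed budget)."""
    speed_mm_per_s = speed_knots * KNOTS_TO_M_PER_S * 1000.0
    return max_blur_px * ground_res_mm / speed_mm_per_s


# Values from the text: 1.5 knots and 0.43 mm per pixel (ABISS2 at 1.75 m).
limit_s = max_exposure_s(1.5, 0.43)
print(f"Exposure limit for 1 px of blur: {limit_s * 1000.0:.2f} ms")
print(f"Blur at a 1 ms exposure: {motion_blur_px(1.5, 0.001, 0.43):.2f} px")
```

Because blur scales linearly with speed in this simplified model, doubling the speed halves the allowable exposure time, which is why higher speeds would be practical only in bright conditions.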

The AUV is typically operated at 1.75-m altitude to maximize resolution of objects and minimize collisions with the lakebed. Collisions with the lakebed increase when altitudes of 1.5 m are used (the minimum possible for the Iver3 AUV). At 1.75 m over a flat surface, the ABISS1 primary camera achieved a ground resolution (that is, the dimension of one pixel in an image) of approximately 0.56 mm, and the ABISS2 primary camera achieved a ground resolution of 0.43 mm based on the scaling approach described by Esselman and others (2025).
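The ground resolution can be combined with the frame rate and vehicle speed to estimate the image footprint and the forward overlap between consecutive primary camera images. The sketch below is a rough estimate under stated assumptions. The 4,096 by 3,000 pixel image size is the manufacturer's nominal value for this camera model and is not given in this report. The long image axis is assumed to lie across track, consistent with the wider swath described for ABISS2. The lakebed is assumed flat, with a nadir view and uniform ground resolution across the image.

```python
KNOTS_TO_M_PER_S = 1852.0 / 3600.0  # one knot is 1,852 meters per hour


def footprint_m(pixels: int, ground_res_mm: float) -> float:
    """Ground distance covered by a row or column of pixels, in meters."""
    return pixels * ground_res_mm / 1000.0


def forward_overlap(speed_knots: float, frame_rate_hz: float,
                    along_track_m: float) -> float:
    """Fraction of each image shared with the next image along track."""
    spacing_m = speed_knots * KNOTS_TO_M_PER_S / frame_rate_hz
    return max(0.0, 1.0 - spacing_m / along_track_m)


# Values from the text.
GROUND_RES_MM = 0.43   # ABISS2 primary camera at 1.75-m altitude
SPEED_KNOTS = 1.5      # standard AUV speed
FRAME_RATE_HZ = 2.0    # camera frame rate

# Assumed image size (nominal sensor resolution, not stated in the report).
ACROSS_TRACK_PX = 4096
ALONG_TRACK_PX = 3000

swath_m = footprint_m(ACROSS_TRACK_PX, GROUND_RES_MM)
along_m = footprint_m(ALONG_TRACK_PX, GROUND_RES_MM)
overlap = forward_overlap(SPEED_KNOTS, FRAME_RATE_HZ, along_m)
print(f"swath {swath_m:.2f} m, along track {along_m:.2f} m, "
      f"forward overlap {overlap:.0%}")
```

Under these assumptions, the estimate is a swath of about 1.76 m and a forward overlap of roughly 70 percent. Actual values depend on altitude, vehicle attitude, and lens and viewport distortion.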

It is essential that the AUV is ballasted and trimmed properly so that it flies level and with minimal roll to achieve as close to a nadir perspective as possible. The AUV is configured to have slightly positive buoyancy. As a result, the vehicle is constantly pitched slightly downward while moving underwater. If the vehicle is too buoyant, it has issues diving and has an increasing downward pitch during operations. Similarly, an improper trim of the vehicle results in changes to the roll of the vehicle and the camera. Proper ballast and trim ensure that the vehicle achieves optimal camera orientation relative to the lakebed, which leads to greater accuracy in estimates of object sizes (for details, refer to Esselman and others [2025]).

Mission planning for the AUV is done in the VectorMapSharp application developed by L3Harris Corporation. This application allows the user to program missions among specific geographic waypoints and control vehicle target speeds, depth or altitude, and other parameters. When planning missions, the best results have been achieved by traversing gradually down slopes, because traversing up steep slopes has resulted in bottom impacts. Because most missions for data collection with ABISS follow a similar structure, the USGS has developed a software utility to avoid unintentional deviations from a standard set of mission parameters and safety settings for planned missions. The same software utility can be used to automatically generate missions from a set of waypoints or from a single start point.

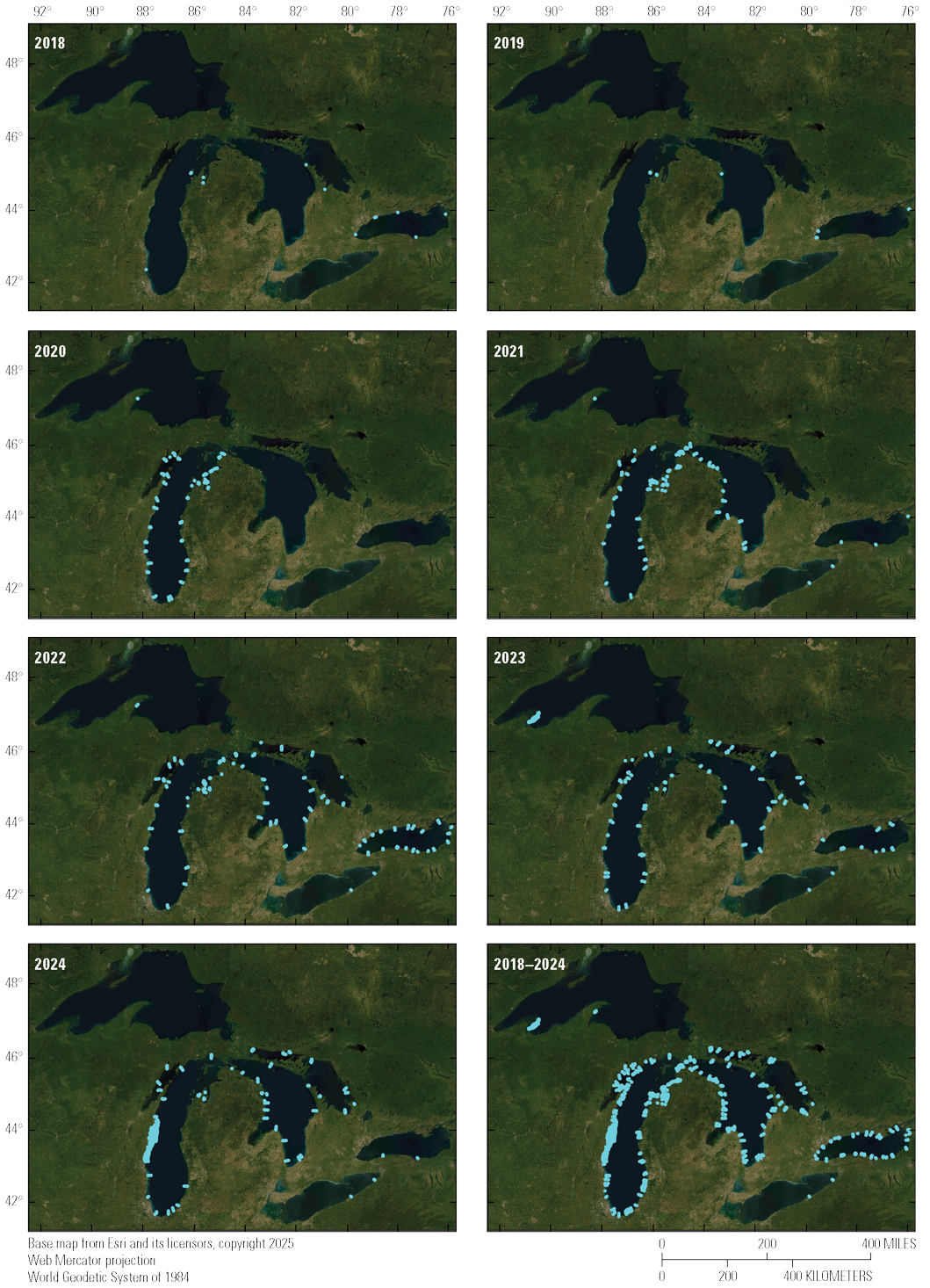

Data Processing

The ABISS camera systems have been used widely to collect lakebed imagery in the Great Lakes since 2018 (fig. 8). A single deployment of the Iver3 AUV carrying ABISS can cover more than 10 kilometers in less than 4 hours at an operating speed of 1.5 knots. ABISS1 recorded approximately 4.7 gigabytes of compressed data per minute, and ABISS2 recorded approximately 3.3 gigabytes of compressed data per minute. When operating at 1.5 knots, these collection rates translate to approximately 99.8 gigabytes per kilometer for ABISS1 and 72.1 gigabytes per kilometer for ABISS2. Together, ABISS1 and ABISS2 recorded more than 9.2 million primary camera images between 2020 and 2024 at locations throughout the Great Lakes (fig. 8). This volume of data underscores the need for efficient data management and processing.
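The conversion from a per-minute recording rate to a per-kilometer data volume is a simple unit calculation, sketched below in Python with hypothetical function names. When the rounded per-minute rates given above are used as inputs, the results fall within about 2 percent of the reported per-kilometer values. The small differences presumably reflect rounding of the published rates.

```python
KNOTS_TO_M_PER_S = 1852.0 / 3600.0  # one knot is 1,852 meters per hour


def minutes_per_km(speed_knots: float) -> float:
    """Time needed to travel 1 kilometer at a constant speed, in minutes."""
    return 1000.0 / (speed_knots * KNOTS_TO_M_PER_S) / 60.0


def gb_per_km(gb_per_min: float, speed_knots: float) -> float:
    """Data volume recorded per kilometer of track, in gigabytes."""
    return gb_per_min * minutes_per_km(speed_knots)


def mission_hours(distance_km: float, speed_knots: float) -> float:
    """Transit time for a track of the given length, in hours."""
    return distance_km * minutes_per_km(speed_knots) / 60.0


# Per-minute rates and operating speed as given in the text.
for name, rate_gb_per_min in (("ABISS1", 4.7), ("ABISS2", 3.3)):
    print(f"{name}: {gb_per_km(rate_gb_per_min, 1.5):.1f} GB per km")
print(f"10 km at 1.5 knots: {mission_hours(10.0, 1.5):.1f} h")
```

The same functions can be used to check whether a planned mission length fits within the available onboard storage.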

Images showing locations (cyan dots) sampled with the Autonomous Benthic Imaging and Surveying System (ABISS) camera systems by year from 2018 to 2024.

Data recorded using ROS are saved in the .bag file format. These files can be read using the rosbag package (Open Robotics, 2023) through the command line or with its C++ and Python application programming interfaces (Open Robotics, 2021). The data are stored as messages with a timestamp for each topic recorded inside the .bag file. Image messages can be converted to OpenCV images using the cv_bridge package (Rabaud and others, 2022), which can then be saved to an image file using OpenCV (OpenCV, 2022). Telnet data from the front seat computer, which are collected at different time intervals than the image data, can be matched to images by interpolating their values between timestamped observations at the image timestamps.
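The timestamp matching described above can be sketched with linear interpolation. The example below uses only the Python standard library and made-up sample values. The function name `interpolate_at`, the choice of linear interpolation, and the decision to return no value outside the telemetry time range are assumptions for illustration, not a description of the USGS processing code. In practice, the timestamps and values would first be extracted from the .bag file.

```python
from bisect import bisect_left
from typing import Optional, Sequence


def interpolate_at(t: float, times: Sequence[float],
                   values: Sequence[float]) -> Optional[float]:
    """Linearly interpolate values (sampled at sorted times) at time t.

    Returns None when t falls outside the sampled range, so that images
    without bracketing telemetry are flagged rather than extrapolated.
    """
    if not times or t < times[0] or t > times[-1]:
        return None
    i = bisect_left(times, t)       # first index with times[i] >= t
    if times[i] == t:
        return values[i]
    t0, t1 = times[i - 1], times[i]
    v0, v1 = values[i - 1], values[i]
    return v0 + (v1 - v0) * (t - t0) / (t1 - t0)


# Hypothetical telemetry: altitude (m) reported four times per second.
telemetry_times = [0.00, 0.25, 0.50, 0.75, 1.00]
telemetry_altitudes = [1.80, 1.76, 1.74, 1.75, 1.79]

# Hypothetical image timestamps at 2 frames per second, offset from telemetry.
image_times = [0.10, 0.60, 1.10]

for t in image_times:
    altitude = interpolate_at(t, telemetry_times, telemetry_altitudes)
    print(t, None if altitude is None else round(altitude, 3))
```

Linear interpolation suits slowly varying quantities such as altitude or depth. Angular values that wrap around, such as compass heading, would need to be unwrapped before interpolation.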

Applications

ABISS in its various integrations was originally intended to serve as a new method for quantifying round goby, a benthic invasive fish that has become an important source of prey for predator fishes in the Laurentian Great Lakes. Round goby on the lakebed range in size from approximately 15 to 270 mm, so high-resolution images were needed for this task. The utility of ABISS for assessing round gobies was first demonstrated by Esselman and others (2025). Round gobies are strongly affiliated with coarse geologic substrates during their summer reproductive season, so ABISS images are also being used to characterize geologic substrate using machine learning (Geisz and others, 2024). The USGS is using ABISS data for other purposes, including enumeration of invasive quagga and zebra mussels, quantification of benthic algae cover, and creation of high-resolution 3D photogrammetric reconstructions of the lakebed.

The larger vision of the program is to use machine learning and computer vision approaches to rapidly characterize key biological and habitat end points of interest to the Great Lakes natural resource management community. To facilitate this vision, automated data production pipelines are being engineered for implementation on the USGS’s high-performance computers and (or) in the cloud.

Future Directions

The customization and deployment of AUVs for the purpose of scientific research in underwater environments, such as the Great Lakes, is becoming increasingly common. AUVs equipped with imaging and acoustic sensor payloads provide new capabilities for investigating and characterizing underwater habitats and species over extensive spatial scales but at high horizontal resolutions (for example, Smale and others [2012], and Rasmussen and others [2017]). AUVs equipped with cameras can enhance traditional sampling by offering direct visual evidence and locations of benthic environmental features, facilitating more efficient and accurate ecological characterization.

Although ABISS2 has improved upon the original ABISS1 system, additional improvements are possible. In addition to contributing to center and edge blur, the acrylic dome viewports used in ABISS2 are easily scratched and damaged. The acrylic dome viewports on ABISS2 will be replaced with flat sapphire viewports as funds allow. Having dome and flat viewports readily swappable for the same set of hardware would allow for a direct comparison of the effects on image quality and model performance. Both ABISS systems have had issues with image quality. The modifications implemented in ABISS2 resolved issues with overexposure in images, but introduced other issues with image clarity near the edges and in the center of images. Some of these issues will be resolved with the change to flat viewports mentioned above, whereas brightness issues may be improved with refinements to the primary camera settings of ABISS2, and potentially with the addition of brighter strobes.

While the intent of this publication was to describe the ABISS hardware and control systems, substantial work is needed to curate and analyze the data that ABISS collects. Given the massive data volumes arising from the collection of high-resolution images and point clouds over large areas of benthic habitat, it is crucial to approach data stewardship and analysis with automation in mind. The USGS is currently developing a custom Python package to handle preprocessing tasks, and deep learning algorithms are in production to address specific image analysis challenges such as geologic substrate classification (Geisz and others, 2024), round goby size and density estimation (Esselman and others, 2025), and other challenges (for example, geomorphic classification or invasive species identification). A containerized software pipeline is currently being engineered to implement image unpacking and usability determination in unison with pattern recognition software, and to write inferred values to a large database that can be queried for specific data needs.

An additional research area with high potential benefits is the creation of 3D products from photogrammetry with SfM or other approaches. Full color orthomosaics from SfM have potential to (1) condense information from many images to a more memory efficient format; (2) convert images with single point location information (latitude and longitude) into georeferenced raster surfaces; (3) support artificial intelligence inference on standard red, green, and blue data bands, and a fourth depth band; and (4) make data more portable and viewable on map servers. Future implementation of machine learning inference directly on 3D RGB orthomosaics may provide an analytical approach that is simultaneously highly visual, easy to map, and data efficient.

Continued progress in underwater imaging hardware and software will improve the ability of researchers to address complex ecological monitoring challenges with greater geographic specificity and less bias. These advancements have promise to contribute significantly to the efforts of regional management and decision-making bodies in the Great Lakes and beyond, as long as data and data products can be efficiently delivered to decision makers in formats and on timescales needed to support resource management.

Summary

Lake and seabed environments support fisheries and other biota that are important to ecosystems and economies, but these environments are difficult to assess using traditional benthic survey methods. Conventional approaches, such as pulling nets or deploying overboard instruments, are constrained by substrate type, have limited spatial resolution and precision, and are inefficient. These constraints reduce the ability to accurately assess benthic species and habitats. To address these limitations, the USGS developed the Autonomous Benthic Imaging and Surveying System (ABISS), a high-resolution underwater camera system designed to collect imagery of lakebed and seabed environments. The system integrates color monocular and stereo cameras into underwater vehicles to collect imagery that can be analyzed to detect organisms and characterize habitat features, including geologic substrate types. The ABISS has been deployed both in autonomous underwater vehicles (AUVs) and in an underwater housing operated by self-contained underwater breathing apparatus (SCUBA) divers.

Two generations of the system have been developed. The Autonomous Benthic Imaging and Surveying System version 1 (ABISS1) was engineered beginning in 2018 and successfully collected tens of thousands of interpretable images of Great Lakes benthic environments before being retired. ABISS1 incorporated a color primary camera, monochrome stereo cameras, onboard lighting, and custom software based on the Robot Operating System (ROS) running on a Linux operating system. The system was integrated into both a diver-operated dive camera and an AUV. Although ABISS1 was effective, it had limitations related to image exposure, insufficient light in dark conditions, camera asynchrony, and data collection efficiency.

In response to these limitations, the system was redesigned, resulting in Autonomous Benthic Imaging and Surveying System version 2 (ABISS2). ABISS2 incorporates higher-resolution color cameras, color stereo imaging, synchronized camera triggering, substantially brighter strobe lighting, improved camera orientation for wider swath coverage, and a photosynthetically active radiation (PAR) sensor for measuring photon flux. The redesigned system also includes an updated software architecture using ROS and a graphical user interface that improves usability and allows camera triggering to be constrained by altitude and other factors. These changes substantially reduced the prevalence of unusable images and improved overall data efficiency and quality.

Both versions of ABISS were designed to operate across a wide range of depths and light conditions, from shallow, brightly lit environments to deep waters below the photic zone. The systems were engineered to collect imagery at sufficient resolution to detect small benthic organisms, such as mussels and fish, and to support three-dimensional reconstruction of benthic habitats using structure-from-motion techniques. Integration with AUVs enabled georeferenced image collection over large spatial extents, while the dive camera allows flexible deployment in locations unsuitable for autonomous vehicles.

Standardized data collection procedures were developed for both diver-operated and AUV-based modes of deployment to optimize image quality, maintain consistent altitude and orientation, and ensure reliable georeferencing. Data volumes generated by ABISS are large, reflecting the high spatial resolution and extensive spatial coverage achieved, and necessitate efficient data handling and processing workflows based on ROS data formats.

Although the engineering and deployment of ABISS were motivated by the need to improve benthic monitoring in the Laurentian Great Lakes, the system is applicable to any aquatic environment with sufficient water clarity and safe operating conditions. By enabling high-resolution, spatially precise imaging of benthic environments, the Autonomous Benthic Imaging and Surveying System provides a powerful tool for mapping and quantifying ecological patterns that were previously difficult or impossible to assess using traditional methods. The system offers the potential for accurate and precise monitoring of native benthic biota, invasive species, and habitat, thereby providing natural resource managers with improved information to support decision making for benthic resource management.

References Cited

Aguzzi, J., Chatzievangelou, D., Robinson, N.J., Bahamon, N., Berry, A., Carreras, M., Company, J.B., Costa, C., del Rio Fernandez, J., Falahzadeh, A., Fifas, S., Flögel, S., Grinyó, J., Jónasson, J.P., Jonsson, P., Lordan, C., Lundy, M., Marini, S., Martinelli, M., Masmitja, I., Mirimin, L., Naseer, A., Navarro, J., Palomeras, N., Picardi, G., Silva, C., Stefanni, S., Vigo, M., Vila, Y., Weetman, A., and Doyle, J., 2022, Advancing fishery-independent stock assessments for the Norway lobster (Nephrops norvegicus) with new monitoring technologies: Frontiers in Marine Science, v. 9, article 969071, accessed December 31, 2025, at https://doi.org/10.3389/fmars.2022.969071.

Bohren, J., Quigley, M., Gerkey, B., Watts, K., and Gassend, B., 2017, ROS-kinetic-joy (ver. 1.11.0): GitHub, [Source code], accessed December 31, 2025, at https://github.com/ros-drivers/joystick_drivers/tree/1.11.0/joy.

Bohren, J., Quigley, M., Gerkey, B., Watts, K., and Gassend, B., 2021, ROS-noetic-joy (ver. 1.15.1): GitHub, [Source code], accessed December 31, 2025, at https://github.com/ros-drivers/joystick_drivers/tree/1.15.1/joy.

Bonin-Font, F., Martorell-Torres, A., Abadal, M.M., Muntaner-González, C., Nordfeldt-Fiol, B.M., González-Cid, Y., Oliver-Codina, G., Máñez-Crespo, J., Reynés, X., Pereda, L., Hernan, G., and Tomás, F., 2025, Measuring the temporal evolution of seagrass Posidonia oceanica coverage using autonomous marine robots and deep learning: Estuarine, Coastal and Shelf Science, v. 312, article 109029, accessed December 31, 2025, at https://doi.org/10.1016/j.ecss.2024.109029.

Bunnell, D.B., Ludsin, S.A., Knight, R.L., Rudstam, L.G., Williamson, C.E., Höök, T.O., Collingsworth, P.D., Lesht, B.M., Barbiero, R.P., Scofield, A.E., Rutherford, E.S., Gaynor, L., Vanderploeg, H.A., and Koops, M.A., 2021, Consequences of changing water clarity on the fish and fisheries of the Laurentian Great Lakes: Canadian Journal of Fisheries and Aquatic Sciences, v. 78, no. 10, p. 1524–1542, accessed December 31, 2025, at https://doi.org/10.1139/cjfas-2020-0376.

Chun, C.L., Ochsner, U., Byappanahalli, M.N., Whitman, R.L., Tepp, W.H., Lin, G., Johnson, E.A., Peller, J., and Sadowsky, M.J., 2013, Association of toxin-producing Clostridium botulinum with the macroalga Cladophora in the Great Lakes: Environmental Science & Technology, v. 47, no. 6, p. 2587–2594, accessed December 31, 2025, at https://doi.org/10.1021/es304743m.

Constantinoiu, L.F., Tavares, A., Cândido, R.M., and Rusu, E., 2024, Innovative maritime uncrewed systems and satellite solutions for shallow water bathymetric assessment: Inventions, v. 9, no. 1, article 20, accessed December 31, 2025, at https://doi.org/10.3390/inventions9010020.

Debout, M., Grimm, M., Engelhard, N., Quilez, P., and Abdullah, S., 2022, Pylon-ros-camera (ver. 0.17.2): GitHub, [Source code], accessed December 31, 2025, at https://github.com/basler/pylon-ros-camera/tree/89cdc7f798eba5e0a11ba42fe9fef994bde8ea05.

Diamanti, E., and Ødegård, Ø., 2024, Visual sensing on marine robotics for the 3D documentation of Underwater Cultural Heritage—A review: Journal of Archaeological Science, v. 166, article 105985, accessed December 31, 2025, at https://doi.org/10.1016/j.jas.2024.105985.

Esselman, P.C., Moradi, S., Geisz, J., and Roussi, C., 2025, A transferable approach for quantifying benthic fish sizes and densities in annotated underwater images: Methods in Ecology and Evolution, v. 16, no. 1, p. 145–159, accessed December 31, 2025, at https://doi.org/10.1111/2041-210X.14453.

Ferraro, D.M., Trembanis, A.C., Miller, D.C., and Rudders, D.B., 2017, Estimates of sea scallop (Placopecten magellanicus) incidental mortality from photographic multiple before–after–control–impact surveys: Journal of Shellfish Research, v. 36, no. 3, p. 615–626, accessed December 31, 2025, at https://doi.org/10.2983/035.036.0310.

Geisz, J.K., Wernette, P.A., and Esselman, P.C., 2024, Classification of lakebed geologic substrate in autonomously collected benthic imagery using machine learning: Remote Sensing (Basel), v. 16, no. 7, article 1264, accessed December 31, 2025, at https://doi.org/10.3390/rs16071264.

Handegard, N.O., De Robertis, A., Holmin, A.J., Johnsen, E., Lawrence, J., Le Bouffant, N., O’Driscoll, R., Peddie, D., Pedersen, G., Priou, P., Rogge, R., Samuelsen, M., and Demer, D.A., 2024, Uncrewed surface vehicles (USVs) as platforms for fisheries and plankton acoustics: ICES Journal of Marine Science, v. 81, no. 9, p. 1712–1723, accessed December 31, 2025, at https://doi.org/10.1093/icesjms/fsae130.

Happel, A., Jonas, J.L., McKenna, P.R., Rinchard, J., He, J.X., and Czesny, S., 2018, Spatial variability of lake trout diets in Lakes Huron and Michigan revealed by stomach content and fatty acid profiles: Canadian Journal of Fisheries and Aquatic Sciences, v. 75, no. 1, p. 95–105, accessed December 31, 2025, at https://doi.org/10.1139/cjfas-2016-0202.

ISO, 2017, ISO/IEC 14882:2017: ISO, Programming language C++, [Standard], accessed December 31, 2025, at https://www.iso.org/standard/70918.html.

Karatayev, A.Y., and Burlakova, L.E., 2025, Dreissena in the Great Lakes—What have we learned in 30 years of invasion: Hydrobiologia, v. 852, no. 5, p. 1103–1130, accessed December 31, 2025, at https://doi.org/10.1007/s10750-022-04990-x.

Luo, M.K., Madenjian, C.P., Diana, J.S., Kornis, M.S., and Bronte, C.R., 2019, Shifting diets of lake trout in northeastern Lake Michigan: North American Journal of Fisheries Management, v. 39, no. 4, p. 793–806, accessed December 31, 2025, at https://doi.org/10.1002/nafm.10318.

Massot, M., 2018, Avt_vimba_camera (commit 47e83f9, kinetic branch): GitHub, [Source code], accessed December 31, 2025, at https://github.com/srv/avt_vimba_camera/tree/47e83f9cb9c7d88516b997abf92dc69e3e02bc6f.

OpenCV, 2022, OpenCV for Python (ver. 4.6.0.66): OpenCV, [Software], accessed December 31, 2025, at https://opencv.org/.

Open Robotics, 2016, ROS Kinetic Kame: Wiki.ROS.org website, [Software distribution], accessed December 31, 2025, at https://wiki.ros.org/kinetic.

Open Robotics, 2020, ROS Noetic Ninjemys: Wiki.ROS.org website, [Software distribution], accessed December 31, 2025, at https://wiki.ros.org/noetic.

Open Robotics, 2021, Rosbag Code API: Wiki.ROS.org website, [Documentation], accessed December 31, 2025, at https://wiki.ros.org/rosbag/Code%20API.

Open Robotics, 2023, Rosbag (ver. 1.16.0): GitHub, [Source code], accessed December 31, 2025, at https://github.com/ros/ros_comm/tree/1.16.0/tools/rosbag.

Pérez-Fuentetaja, A., Clapsadl, M.D., Getchell, R.G., Bowser, P.R., and Lee, W.T., 2011, Clostridium botulinum type E in Lake Erie—Inter-annual differences and role of benthic invertebrates: Journal of Great Lakes Research, v. 37, no. 2, p. 238–244, accessed December 31, 2025, at https://doi.org/10.1016/j.jglr.2011.03.013.

Pothoven, S.A., and Madenjian, C.P., 2013, Increased piscivory by lake whitefish in Lake Huron: North American Journal of Fisheries Management, v. 33, no. 6, p. 1194–1202, accessed December 31, 2025, at https://doi.org/10.1080/02755947.2013.839973.

Python Software Foundation, 2023, Python (ver. 3.x): Python Software Foundation, [Programming language], accessed December 31, 2025, at https://www.python.org/.

Rabaud, V., Mihelich, P., and Bowman, J., 2022, cv_bridge (ver. 1.16.2): GitHub, [Source code], accessed December 31, 2025, at https://github.com/ros-perception/vision_opencv/tree/1.16.2/cv_bridge.

Roseman, E.F., Schaeffer, J.S., Bright, E., and Fielder, D.G., 2014, Angler-caught piscivore diets reflect fish community changes in Lake Huron: Transactions of the American Fisheries Society, v. 143, no. 6, p. 1419–1433, accessed December 31, 2025, at https://doi.org/10.1080/00028487.2014.945659.

Schauwecker, K., 2018, nerian_stereo (ver. 2.2.0): GitHub, [Source code], accessed December 31, 2025, at https://github.com/nerian-vision/nerian_stereo/tree/2.2.0.

Schauwecker, K., and Torky, R.Y., 2023, nerian_stereo (ver. 3.11.0): GitHub, [Source code], accessed December 31, 2025, at https://github.com/nerian-vision/nerian_stereo/tree/3.11.0.

Smale, D.A., Kendrick, G.A., Harvey, E.S., Langlois, T.J., Hovey, R.K., Van Niel, K.P., Waddington, K.I., Bellchambers, L.M., Pember, M.B., Babcock, R.C., Vanderklift, M.A., Thomson, D.P., Jakuba, M.V., Pizarro, O., and Williams, S.B., 2012, Regional-scale benthic monitoring for ecosystem-based fisheries management (EBFM) using an autonomous underwater vehicle (AUV): ICES Journal of Marine Science, v. 69, no. 6, p. 1108–1118, accessed December 31, 2025, at https://doi.org/10.1093/icesjms/fss082.

Staton, J.M., Roswell, C.R., Fielder, D.G., Thomas, M.V., Pothoven, S.A., and Höök, T.O., 2014, Condition and diet of yellow perch in Saginaw Bay, Lake Huron (1970–2011): Journal of Great Lakes Research, v. 40, no. 1, p. 139–147, accessed December 31, 2025, at https://doi.org/10.1016/j.jglr.2014.02.021.

Ubuntu, 2020, Ubuntu 16.04.7 LTS (Xenial Xerus): Ubuntu releases, [Operating system], accessed December 31, 2025, at https://releases.ubuntu.com/16.04/.

Ubuntu, 2023, Ubuntu 20.04.6 LTS (Focal Fossa): Ubuntu releases, [Operating system], accessed December 31, 2025, at https://releases.ubuntu.com/20.04/.

Ussler, W., III, Doucette, G.J., Preston, C.M., Weinstock, C., Allaf, N., Roman, B., Jensen, S., Yamahara, K., Lingerfelt, L.A., Mikulski, C.M., Hobson, B.W., Kieft, B., Raanan, B.-Y., Zhang, Y., Errera, R.M., Ruberg, S.A., Den Uyl, P.A., Goodwin, K.D., Soelberg, S.D., Furlong, C.E., Birch, J.M., and Scholin, C.A., 2024, Underway measurement of cyanobacterial microcystins using a surface plasmon resonance sensor on an autonomous underwater vehicle: Limnology and Oceanography Methods, v. 22, no. 9, p. 681–699, accessed December 31, 2025, at https://doi.org/10.1002/lom3.10627.

Xie, Y., Bore, N., and Folkesson, J., 2022, Bathymetric reconstruction from sidescan sonar with deep neural networks: IEEE Journal of Oceanic Engineering, v. 48, no. 2, p. 372–383, accessed December 31, 2025, at https://doi.org/10.1109/JOE.2022.3220330.

Yoctopuce Sarl, 2022, yoctolib (commit 4433eb4): GitHub, [Source code], accessed December 31, 2025, at https://github.com/yoctopuce/yoctolib_python/tree/4433eb42a162273d7fce44be53311e80aae93466.

Abbreviations

| Abbreviation | Definition |
|---|---|
| 3D | three-dimensional |
| ABISS | Autonomous Benthic Imaging and Surveying System |
| AUV | autonomous underwater vehicle |
| CPU | central processing unit |
| fps | frame per second |
| GPS | Global Positioning System |
| IMU | inertial measurement unit |
| INS | inertial navigation system |
| OS | operating system |
| PAR | photosynthetically active radiation |
| ROS | Robot Operating System |
| SCUBA | self-contained underwater breathing apparatus |
| SfM | structure from motion |
| SSD | solid-state drive |
| USB | Universal Serial Bus |
| USGS | U.S. Geological Survey |

For more information about this publication, contact:

Director, USGS Great Lakes Science Center

1451 Green Road

Ann Arbor, MI 48105

734-994-8780

For additional information, visit: https://www.usgs.gov/centers/great-lakes-science-center

Publishing support provided by the USGS Science Publishing Network, Rolla Publishing Service Center

Disclaimers

Any use of trade, firm, or product names is for descriptive purposes only and does not imply endorsement by the U.S. Government.

Although this information product, for the most part, is in the public domain, it also may contain copyrighted materials as noted in the text. Permission to reproduce copyrighted items must be secured from the copyright owner.

Suggested Citation

Tilley, A.T., Esselman, P.C., Roussi, C., Hart, B., Lyons, A., Arnold, A.J., Childress, J., and Weller, C., 2026, Design and function of the Autonomous Benthic Imaging and Surveying System (ABISS) for remote sensing of lake and seabed environments: U.S. Geological Survey Techniques and Methods, book 8, chap. D3, 18 p., https://doi.org/10.3133/tm8D3.

ISSN: 2328-7055 (online)


| Publication type | Report |
|---|---|
| Publication Subtype | USGS Numbered Series |
| Title | Design and function of the Autonomous Benthic Imaging and Surveying System (ABISS) for remote sensing of lake and seabed environments |
| Series title | Techniques and Methods |
| Series number | 8-D3 |
| DOI | 10.3133/tm8D3 |
| Publication Date | February 23, 2026 |
| Year Published | 2026 |
| Language | English |
| Publisher | U.S. Geological Survey |
| Publisher location | Reston, VA |
| Contributing office(s) | Great Lakes Science Center |
| Description | vii, 18 p. |
| Country | Canada, United States |
| Other Geospatial | Great Lakes |
| Online Only (Y/N) | Y |
| Additional Online Files (Y/N) | N |